
120+ PRE-BUILT CONNECTORS

Connect Your Entire Business, Faster

Break data silos with 120+ pre-built connectors for any data source or sink.

Save 3-6 months of development per connector

Modernize your tech stack by bridging legacy & cloud systems

Reduce ops burden, risk, & TCO with fully managed connectors

Kafka Connect is the open standard for integrating data to and from Apache Kafka®.

Confluent takes Kafka Connect further by offering the market's largest portfolio of 120+ pre-built connectors—all backed by enterprise-grade security, reliability, and support to eliminate risk and operational burden.

Build GenAI Apps Faster With Connectors

Learn how you can build a real-time knowledge base for RAG architecture

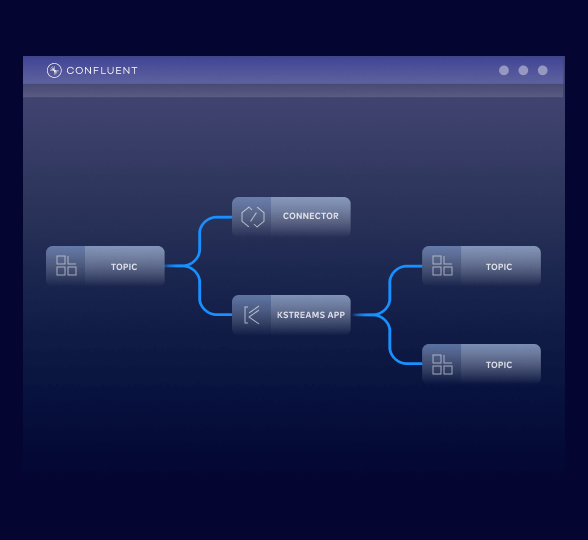

Integrate Data Without Code

Free your teams from writing generic integration code and managing connectors.

Simply define the connector, identify its sources and sinks, and start streaming within your private network via config file, UI, or API.
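As a rough sketch of the config-file and API path: on a self-managed Kafka Connect worker, a connector is typically described by a small JSON document and submitted to the worker's REST API (`POST /connectors`). The example below only builds that payload and makes no network call. The connector name, topic, and file path are placeholders, and it uses the FileStream sink that ships with Apache Kafka rather than any specific Confluent connector; fully managed connectors in Confluent Cloud use their own configuration keys.

```python
import json


def build_connector_request(name: str, topics: list[str], output_file: str) -> dict:
    """Build a JSON body in the shape Kafka Connect's POST /connectors expects."""
    return {
        "name": name,
        "config": {
            # FileStream sink bundled with Apache Kafka; chosen only for illustration.
            "connector.class": "org.apache.kafka.connect.file.FileStreamSinkConnector",
            "tasks.max": "1",
            # Sink connectors read from a comma-separated list of topics.
            "topics": ",".join(topics),
            # Connector-specific setting: where the FileStream sink writes records.
            "file": output_file,
        },
    }


# Placeholder values; replace with your own connector name, topic, and path.
request_body = build_connector_request("orders-file-sink", ["orders"], "/tmp/orders.txt")
print(json.dumps(request_body, indent=2))
```

The same name-plus-config structure applies to most connectors; only the `connector.class` and the connector-specific keys change.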

Confluent provides 120+ integrations with:

Pre-built connectors

Avoid 3-6 engineering months of designing, building, and testing each connector

Fully managed connectors

Launch in minutes without operational overhead, reducing your total cost of ownership

Custom connectors

Bring your own connectors for custom apps and let us manage the Connect infrastructure

Enterprise-grade security & support

Power mission-critical workloads with private networking, 99.99% uptime SLA, RBAC, and expert support

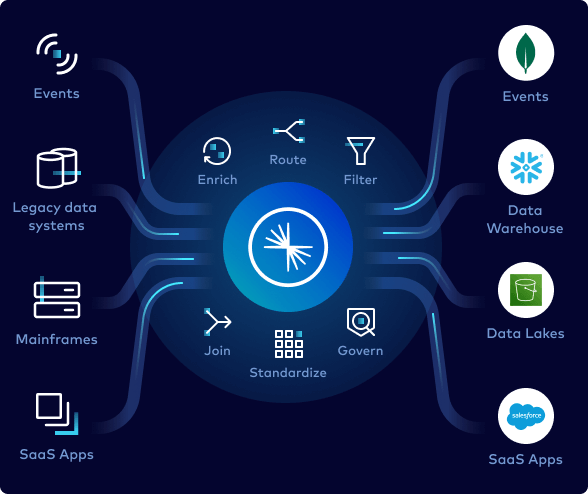

Modernize Your Tech Stack

Easily build data streaming pipelines using connectors to bridge legacy systems and modern cloud technologies.

Confluent’s connectors include:

Databases

Accelerate and de-risk your migration from legacy databases to cloud-native ones with CDC connectors

Data warehouses

Stream real-time data into cloud data warehouses so data teams work with the latest information

Data lakes

Populate your data lakes and simplify your data architecture by decoupling data sources and sinks

SaaS apps

Connect all of your SaaS apps so that data from any part of the business is available for all to use

Simplify Day 2 Operations

Confluent provides extensive control and visibility across your environment.

You can streamline your workflow with:

AWS IAM AssumeRole

Easily and securely manage access to AWS resources across an organization with temporary credentials

Instant config validations

Receive feedback and error alerts for field inputs to successfully launch connectors

Client-side field level encryption

Support highly sensitive workloads by encrypting and decrypting records within the connector

Custom single message transforms

Perform lightweight data transformations like masking and filtering in flight within the source or sink connector before stream processing

Logs & metrics monitor

View connector events in the console for contextual information and error debugging

“With its powerful, fully managed connectors, we effortlessly stream data into destinations like Amazon S3, making real-time event logging scalable and efficient… Confluent has a vast library of pre-built connectors that allow us to quickly prototype before deciding on a final path.”

EVO Banco was able to access a wide range of data sources via virtually unlimited connectors. Configuration was simple; just specify the type of data source required and provide the connection details. In this way, the company was able to start channeling data instantly and seamlessly.

“Confluent Fully Managed Connectors allow us to innovate faster… we can actually invest our development resources into building new capabilities instead of custom integrations to common data sources.”

New developers get $400 in credits during their first 30 days—no sales rep required.

Confluent provides everything you need to:

- Build with client libraries for languages like Java and Python, sample code, 120+ pre-built connectors, and a Visual Studio Code extension.

- Learn from on-demand courses, certifications, and a global community of experts.

- Operate with a CLI, IaC support for Terraform and Pulumi, and OpenTelemetry observability.

You can sign up with your cloud marketplace account below, or directly with us.

Confluent Cloud

A fully managed, cloud-native service for Apache Kafka®

Confluent Connectors | FAQs

Can I modernize legacy systems with Confluent connectors?

Confluent offers a portfolio of more than 120 pre-built, enterprise-grade connectors that make it easy to connect external systems to your Kafka deployments. By taking advantage of these connectors, you no longer have to write, test, and maintain integration code just to get your data into and out of Kafka topics.

That means you can modernize legacy systems by connecting your legacy databases, mainframes, and messaging systems to cloud data services and platforms, with Confluent as your intermediary data access layer.

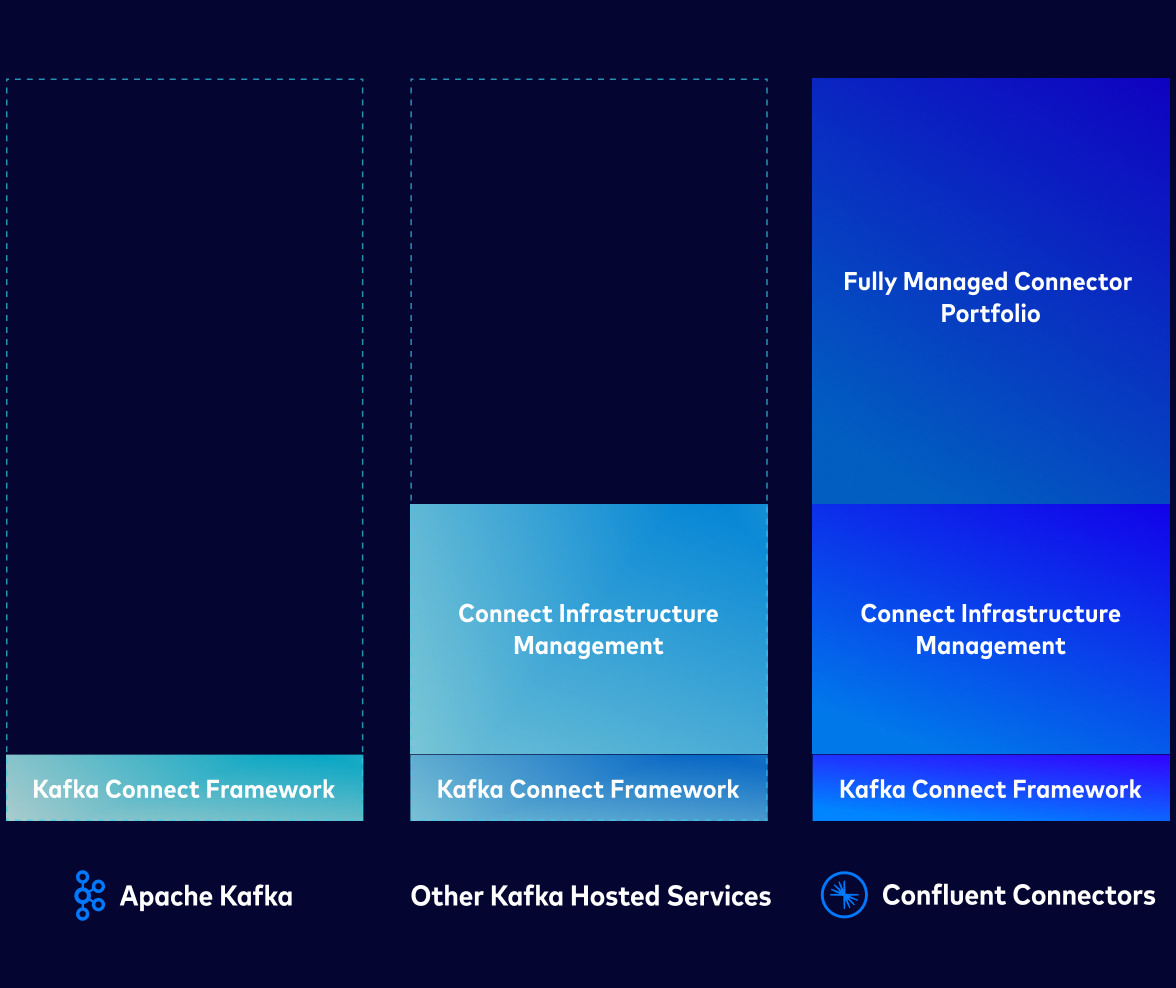

What makes Confluent-managed connectors different from open source Kafka Connect?

Confluent offers 80+ fully managed connectors, which means that you don’t have to manage connector plugins or their underlying infrastructure and can offload provisioning, upgrades, and patching.

Confluent-managed connectors eliminate integration overhead for your Kafka deployments and come with a 99.99% uptime SLA for production workloads. As a result, using these connectors frees your engineering teams from the burden of managing integration code and infrastructure. Instead, your teams can focus on building applications and unlocking the value of your data.

Other key operational benefits include:

- Seamless Upgrades: Features like plugin versioning for custom connectors allow for smooth upgrades without losing offsets or disrupting service.

- Improved Troubleshooting: Non-admin roles can now access connector logs, empowering them to manage and diagnose their own connectors without escalating to an administrator.

- Less Maintenance: Confluent manages all the underlying infrastructure so your team doesn't have to worry about provisioning, patching, or scaling the Connect environment.

How do Confluent connectors help reduce total cost of ownership (TCO)?

Confluent's fully managed connectors help lower your Kafka TCO by eliminating the costs associated with building and maintaining the integration code in connector plugins and from self-managing a Kafka Connect environment.

Key savings come from:

- Cost-Efficient Infrastructure Scaling: Elastic scaling for all Confluent connectors and autoscaling with fully managed connectors enables you to scale and consume compute resources more efficiently.

- Reduced Operational Burden: With fully managed connectors, you save on the engineering time and resources needed for maintenance, troubleshooting, and upgrades. High availability reduces the business impact of unplanned pipeline downtime.

- Increased Developer Productivity: With simplified, secure streaming integration, your developer teams spend less time blocked by data access issues and can dedicate more resources to building real-time applications and event-driven systems across the organization. And using pre-built connectors can help cut 3-6 months from your production timelines.

How does Confluent’s price guarantee apply to connectors?

Confluent will beat or match the price of Kafka services from your hyperscaler, allowing you to save money on your streaming workloads—including when it comes to your Connect tasks.

Visit the Confluent Cost Estimator to see how much you can save as you scale seamlessly and cost-effectively with Confluent. Explore the volume-based discounts that you can access for Connect tasks with annual commitments for Confluent Cloud.

What security and compliance features are available for sensitive data?

Confluent connectors are built with enterprise-grade features for security, reliability, and developer productivity, including secure private networking configurations, built-in single message transforms (SMTs), and more.

Confluent helps ensure that the streaming data pipelines you build are secure and compliant, with purpose-built features for handling sensitive data:

- Granular Access Control: Enhanced role-based access control (RBAC) allows you to grant users the specific permissions they need to manage connectors without giving them full admin rights.

- Secure Networking: You can connect to data systems in private networks using features like DNS Forwarding and Egress PrivateLink, restricting traffic from the public internet.

- Data Masking: You can use single message transforms (SMTs) to perform lightweight transformations on connector workloads, including masking sensitive data fields within a data stream.

- Data Encryption: Specific connectors, like the one for Oracle XStream, support client-side field level encryption (CSFLE) to keep sensitive data protected at all times.

- Advanced Authentication: Many connectors support modern, secure authentication methods like AWS IAM AssumeRole, which removes the need for long-lived access keys. Others support protocols like OAuth2 and SSO integration.
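To make the data masking point more concrete, here is a hedged sketch of how an SMT is usually attached to a connector configuration. `MaskField` is one of the transforms bundled with Apache Kafka Connect; the alias `maskPii` and the field names are hypothetical. The `apply_mask` helper is only a simplified local illustration of the effect on string fields and is not part of any Kafka or Confluent API.

```python
def with_mask_transform(config: dict, fields: list[str], alias: str = "maskPii") -> dict:
    """Return a copy of a connector config with a MaskField SMT on the record value.

    Assumes no other transforms are configured; an existing "transforms"
    entry would be overwritten by this sketch.
    """
    updated = dict(config)
    updated["transforms"] = alias
    updated[f"transforms.{alias}.type"] = (
        "org.apache.kafka.connect.transforms.MaskField$Value"
    )
    updated[f"transforms.{alias}.fields"] = ",".join(fields)
    return updated


def apply_mask(record: dict, fields: list[str]) -> dict:
    """Simplified illustration: replace the named string fields with an empty string."""
    return {key: ("" if key in fields else value) for key, value in record.items()}


# Hypothetical connector config and record, used only to show the shapes involved.
masked_config = with_mask_transform({"name": "customers-sink"}, ["ssn", "email"])
for key, value in masked_config.items():
    print(f"{key} = {value}")

print(apply_mask({"id": "42", "ssn": "123-45-6789", "email": "a@example.com"}, ["ssn", "email"]))
```

Because the transform runs inside the connector, the masked values are what land in the topic or the sink, before any downstream stream processing sees the record.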

How quickly can I launch a connector in Confluent Cloud?

You can launch fully managed connectors in seconds with no-code provisioning and real-time configuration checks. Simply find the fully managed connector you need on Confluent Cloud and hit launch, or access them directly from your Confluent Cloud account.

Self-managed connectors require more initial setup to run alongside your cloud deployment. Search Confluent Hub to find self-managed Confluent, partner, and community-built connectors and then visit Docs to learn how to connect self-managed Kafka Connect to Confluent Cloud.
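For a sense of what that initial setup involves, the sketch below renders a minimal distributed-worker properties file that points a self-managed Kafka Connect worker at a cluster secured with SASL_SSL and the PLAIN mechanism, using an API key and secret. The property names are standard Kafka Connect and Kafka client settings; the bootstrap address, credentials, group ID, and internal topic names are placeholders, and a production setup would likely need more settings than shown here.

```python
def render_worker_properties(bootstrap_servers: str, api_key: str, api_secret: str) -> str:
    """Render a minimal Kafka Connect distributed-worker config as properties text."""
    jaas = (
        "org.apache.kafka.common.security.plain.PlainLoginModule required "
        f'username="{api_key}" password="{api_secret}";'
    )
    security = {
        "security.protocol": "SASL_SSL",
        "sasl.mechanism": "PLAIN",
        "sasl.jaas.config": jaas,
    }
    props = {
        "bootstrap.servers": bootstrap_servers,
        # Placeholder names for the worker group and its internal topics.
        "group.id": "connect-cluster",
        "config.storage.topic": "connect-configs",
        "offset.storage.topic": "connect-offsets",
        "status.storage.topic": "connect-status",
        "key.converter": "org.apache.kafka.connect.json.JsonConverter",
        "value.converter": "org.apache.kafka.connect.json.JsonConverter",
    }
    # The worker and its embedded producer and consumer clients each need
    # the security settings, so repeat them under each prefix.
    for prefix in ("", "producer.", "consumer."):
        for key, value in security.items():
            props[prefix + key] = value
    return "\n".join(f"{key}={value}" for key, value in props.items())


# Placeholder values; substitute your own cluster endpoint and credentials.
print(render_worker_properties("<BOOTSTRAP_SERVER>:9092", "<API_KEY>", "<API_SECRET>"))
```

In practice the credentials would come from a secrets store or environment variables rather than being written into a file in plain text.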

Do I need to browse Confluent Hub to get started?

While you don't need to browse Confluent Hub to get started, it is a great way to see all the options available for your self-managed and fully managed Kafka deployments. The Hub helps you discover what integrations are available via Confluent, as well as find open source and partner-verified connectors beyond the 120+ that we offer.

If you’re looking specifically for fully managed connectors to use on AWS, Microsoft Azure, or Google Cloud, you can log in to the Cloud Console and launch connectors in just a few clicks.

Can I bring my own custom connector to Confluent Cloud?

Yes, you can bring your custom and in-house built connectors to Confluent Cloud. The platform now supports plugin versioning for custom connectors, which allows you to upload a new version of your plugin and seamlessly direct active connectors to it, resuming from the last offset. This is managed through the new Custom Connect Plugin Management (CCPM) APIs.

How do Confluent connectors support real-time AI and analytics initiatives?

Confluent connectors are the foundational layer for real-time AI and analytics, as they feed live data streams from across your business into the platforms where this analysis occurs. Capture changes as they happen from databases like PostgreSQL and Oracle using Confluent’s CDC connectors.

Then, stream the real-time events needed for AI/ML models and analytics dashboards to understand and act on the state of the business. Combined with sink connectors for major analytics and data warehouse platforms—such as Snowflake and Amazon Redshift—this allows you to stream processed, enriched data directly into these systems for immediate use. This ability to build real-time pipelines is essential for unlocking new AI and analytics use cases.
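The change-capture idea above can be sketched in a few lines. The example assumes a Debezium-style change event envelope, with an `op` code and `before`/`after` row images; the exact event schema of a given Confluent CDC connector may differ, and the table and field names here are made up. It shows how a downstream consumer could keep a replica in sync by applying each change in order.

```python
def apply_change(table: dict, event: dict, key_field: str = "id") -> None:
    """Apply one change event to an in-memory replica keyed by primary key."""
    op = event["op"]
    if op in ("c", "r", "u"):  # create, snapshot read, update
        row = event["after"]
        table[row[key_field]] = row
    elif op == "d":  # delete
        table.pop(event["before"][key_field], None)
    else:
        raise ValueError(f"unknown operation code: {op!r}")


# Hypothetical change stream for an "orders" table.
events = [
    {"op": "c", "before": None, "after": {"id": 1, "status": "new"}},
    {"op": "u", "before": {"id": 1, "status": "new"}, "after": {"id": 1, "status": "paid"}},
    {"op": "c", "before": None, "after": {"id": 2, "status": "new"}},
    {"op": "d", "before": {"id": 2, "status": "new"}, "after": None},
]

replica: dict = {}
for event in events:
    apply_change(replica, event)

print(replica)  # {1: {'id': 1, 'status': 'paid'}}
```

A sink connector for a warehouse or analytics platform plays the role of `apply_change` here, which is why the target system can reflect the source database shortly after each change.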