
Baader’s Journey in Crafting an IoT-Driven Food Value Chain with Data Streaming

While the current hype around the Internet of Things (IoT) focuses on smart “things”—smart homes, smart cars, smart watches—the first known IoT device was a simple Coca-Cola vending machine at Carnegie Mellon University in Pittsburgh.

Students in the 1980s, tired of long walks to an empty machine, installed a board that tracked the machine’s sensors to determine whether the machine was stocked and the bottles were cold. They ran a line from the board to a computer connected to ARPANET, the network that would become the internet, and wrote a program so any computer connected to ARPANET or the university’s ethernet could check the machine’s inventory.

Fast forward a few decades. The idea those students had about tracking inventory with sensors is now widely practiced by soda companies. In fact, IoT is reshaping entire industries. And Confluent Cloud and Apache Kafka® are at the center of this transformation.

“Kafka is business critical for us. So working with Confluent Cloud and the Kafka experts was an obvious choice,” says Stefan Frehse, Software Architect, Digitalization, for food processing machinery company BAADER.

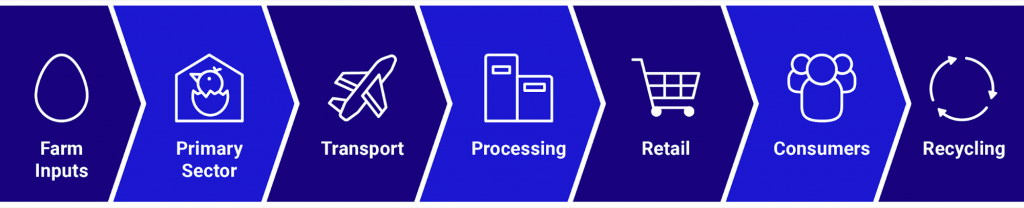

Frehse and his team are using Confluent Cloud and associated IoT technologies to build a data-driven food value chain for all their partners, from farm to fork. They believe they are the only company in the industry doing so at this scale, across every member of the value chain. By capturing data throughout the chain, BAADER can give factories the ability to improve animal welfare, track trucks, and identify machines that need maintenance.

Eventually, they want to give consumers the opportunity to provide feedback on food quality so each party along the value chain can improve their operations where necessary.

Retail is another industry being disrupted by IoT and Confluent Cloud. Los Angeles-based Mojix develops a retail and supply chain IoT platform, built on Confluent Cloud, that gives retailers insight into their real-time inventory so they can improve the omnichannel customer experience (online, in a physical store, or by phone).

With a modern approach to the problem that the Carnegie Mellon students were solving, Mojix ingests data from RFID readers, camera sensors, beacons, mobile devices and routers into Confluent Cloud so that retailers can store, analyze and act on inventory data through its ViZix® item chain management platform. While traditional inventory accuracy can be as low as 65%, Mojix customers have seen inventory accuracy as high as 99%.

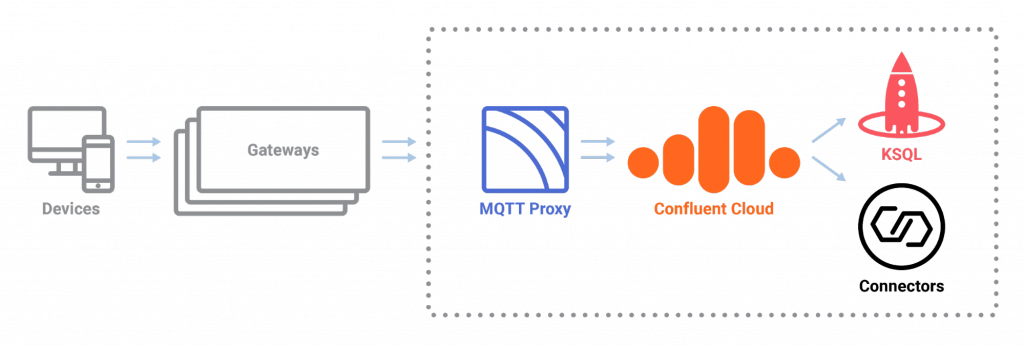

Confluent Platform enables IoT-based disruption and innovation in two key ways. First, it provides a highly scalable, real-time pipeline to collect, transport and store IoT events globally. Second, it provides key frameworks and APIs like Kafka Connect, KSQL and Kafka Streams to build an entirely new class of applications incorporating both real-time and stored data.

Of course, any competent organization can stand up its own cloud instances and run Confluent Platform on hosted infrastructure, but in many cases it is better not to take on that operational burden yourself. According to Frehse, Confluent Cloud (Confluent Platform delivered as a resilient, scalable event streaming service) provides all the tools BAADER needs to manage vast amounts of IoT event streams and turn them into insights for themselves, partners and consumers.

Traditional repositories would be overwhelmed by the sheer volume and speed of IoT data. There are many types of connected devices sending data in different formats, so there is no traditional one-size-fits-all solution that BAADER could plug and play.

Confluent Cloud enables developers to address all of their IoT-related challenges:

- Data volumes are less of a concern. Confluent Cloud ensures mission-critical reliability at massive scale and also guarantees 99.95% uptime.

- With its vast library of connectors along with KSQL—Confluent’s streaming SQL engine for Apache Kafka—Confluent Cloud enables you to connect all your apps and events, analyze them, derive efficiencies and share insights. This is critical in industries where data is shared between suppliers, transportation companies, raw goods processors, retailers and consumers.

- Confluent Cloud, along with Kafka Streams and KSQL, enables continuous event stream processing: filtering and aggregation retain only the most valuable data, and the results feed actionable insights such as real-time malfunction detection and alerting.

- Confluent Cloud enables you to stream across hybrid and multi-cloud environments. This is important when IoT data is shared across dissimilar environments and diverse geographies. With Confluent Cloud, you can build a persistent bridge from on-prem to cloud with the industry’s only hybrid Kafka service, or you can stream across public clouds for multi-cloud data pipelines.
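To make the filtering, aggregation and alerting described in the list above more concrete, here is a minimal sketch in plain Python. It does not use the Kafka Streams or KSQL APIs, and it is not BAADER's or Mojix's implementation. The `Reading` type, the machine names, the 60-second tumbling window and the temperature thresholds are all hypothetical, chosen only to illustrate the idea.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, Iterator, List, Tuple


@dataclass(frozen=True)
class Reading:
    """A hypothetical sensor event; field names are illustrative only."""
    machine_id: str
    timestamp_s: int       # event time, in seconds
    temperature_c: float


def valid(readings: Iterable[Reading]) -> Iterator[Reading]:
    """Filtering step: drop readings outside an assumed plausible range."""
    for r in readings:
        if -40.0 <= r.temperature_c <= 150.0:
            yield r


def window_averages(
    readings: Iterable[Reading], window_s: int = 60
) -> Dict[Tuple[str, int], float]:
    """Aggregation step: mean temperature per machine per tumbling window.

    The key is (machine_id, window start time in seconds).
    """
    sums = defaultdict(lambda: [0.0, 0])  # key -> [running total, count]
    for r in readings:
        window_start = r.timestamp_s // window_s * window_s
        bucket = sums[(r.machine_id, window_start)]
        bucket[0] += r.temperature_c
        bucket[1] += 1
    return {key: total / count for key, (total, count) in sums.items()}


def alerts(
    averages: Dict[Tuple[str, int], float], threshold_c: float = 80.0
) -> List[Tuple[str, int]]:
    """Alerting step: windows whose mean exceeds an assumed threshold."""
    return sorted(key for key, avg in averages.items() if avg > threshold_c)


if __name__ == "__main__":
    events = [
        Reading("filleter-1", 5, 70.0),
        Reading("filleter-1", 30, 72.0),
        Reading("filleter-1", 65, 90.0),
        Reading("filleter-1", 90, 94.0),
        Reading("filleter-1", 100, 999.0),  # sensor glitch, filtered out
        Reading("chiller-2", 10, 4.0),
    ]
    print(alerts(window_averages(valid(events))))  # [('filleter-1', 60)]
```

In a real deployment the same three steps would run continuously over an unbounded stream rather than over a list in memory, which is the role the text assigns to Kafka Streams and KSQL.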

Mojix leverages the Confluent Cloud event streaming service to stream higher-level events from hundreds of on-prem Kafka brokers to a centrally managed cloud infrastructure. Their ViZix platform supports real-time operational intelligence with complex event processing across a hybrid deployment that spans the edge (retail stores and distribution centers) and the cloud.

“We use the same kinds of Kafka Streams applications, complex event processing, transformations and connectors in the cloud as we do on the edge,” says Gustavo Rivera, senior vice president, software development, at Mojix. “We’ve extended everything elegantly right to the edge, so we can deploy to containers at thousands of retail locations and maintain a secure connection up to the cloud. We can decide which rules we want to have on the edge and which in the cloud, and every time we improve our Kafka architecture or our Streams apps, all of the new capabilities are immediately available across the entire platform.”

Back in the food industry, BAADER is leveraging IoT and Confluent Cloud to go beyond their machinery business and transform into a modern, digital, full solution provider along the entire food value chain from farm to consumers, all the way to recycling.

BAADER collects events from throughout the food value chain with Kafka and Confluent Cloud to analyze detailed data around food safety, animal welfare, transportation details, processing machinery data, product quality and processing efficiency. BAADER processes and analyzes events with KSQL, then leverages connectors to visualize the results with Elasticsearch and Kibana. They transparently share these events and insights with partners throughout the food value chain to drive new business.

Frehse cites several key benefits to creating this IoT-based, data-driven food value chain in Confluent Cloud:

- His team offloads management of Kafka across both development and production to leading Kafka experts at Confluent

- The team found KSQL to be the easiest and best-suited engine for writing streaming applications on Kafka

- The library of connectors available to use with Confluent Cloud enabled many options for pushing events and insights to partners and customers

- BAADER needed to configure only a few parameters (throughput and retention time) and could leave all other details for the Kafka experts to manage

Retail and food & beverage are just two examples of Confluent Cloud at the heart of efforts leveraging IoT and transforming entire industries. If you’d like to learn more about Mojix and their real-time retail and supply chain IoT platform, please read this case study. Expect to soon see their platform making its way into industries such as manufacturing, energy and healthcare.

For more details on BAADER and how they are revolutionizing food preparation with IoT and Confluent Cloud, check out this presentation, and stay tuned for an upcoming blog post specifically focused on their use case and how they are implementing it with Confluent Cloud.

You can also learn more about Confluent Cloud, a fully managed, complete event streaming service based on Apache Kafka. Use the promo code CL60BLOG to get an additional $60 of free Confluent Cloud usage.*
