Stream Processing

Introducing Confluent Private Cloud: Cloud-Level Agility for Your Private Infrastructure

Confluent Private Cloud (CPC) is a new software package that extends Confluent’s cloud-native innovations to your private infrastructure. CPC offers an enhanced broker with up to 10x higher throughput and a new Gateway that provides network isolation and central policy enforcement without client...

Queues for Apache Kafka® Is Here: Your Guide to Getting Started in Confluent

Confluent announces the General Availability of Queues for Kafka on Confluent Cloud and Confluent Platform with Apache Kafka 4.2. This production-ready feature brings native queue semantics to Kafka through KIP-932, enabling organizations to consolidate streaming and queuing infrastructure while...

New in Confluent Intelligence: A2A, Multivariate Anomaly Detection, Vector Search for Cosmos DB, Amazon S3 Vectors, and More

Explore new Confluent Intelligence features: A2A integration, multivariate anomaly detection, vector search for Cosmos DB and S3 Vectors, Private Link, and MCP support.

Introducing KSQL: Streaming SQL for Apache Kafka

Note: ksqlDB is the successor to KSQL. Read the announcement to learn more. To get started with ksqlDB in Confluent Cloud, you can sign up for fully managed Apache Kafka […]

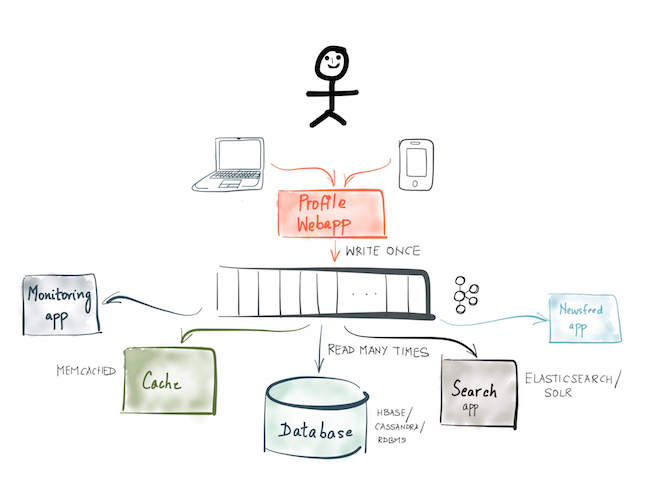

Leveraging the Power of a Database ‘Unbundled’

When you build microservices using Apache Kafka®, the log can be used as more than just a communication protocol. It can be used to store events: messaging that remembers. This […]

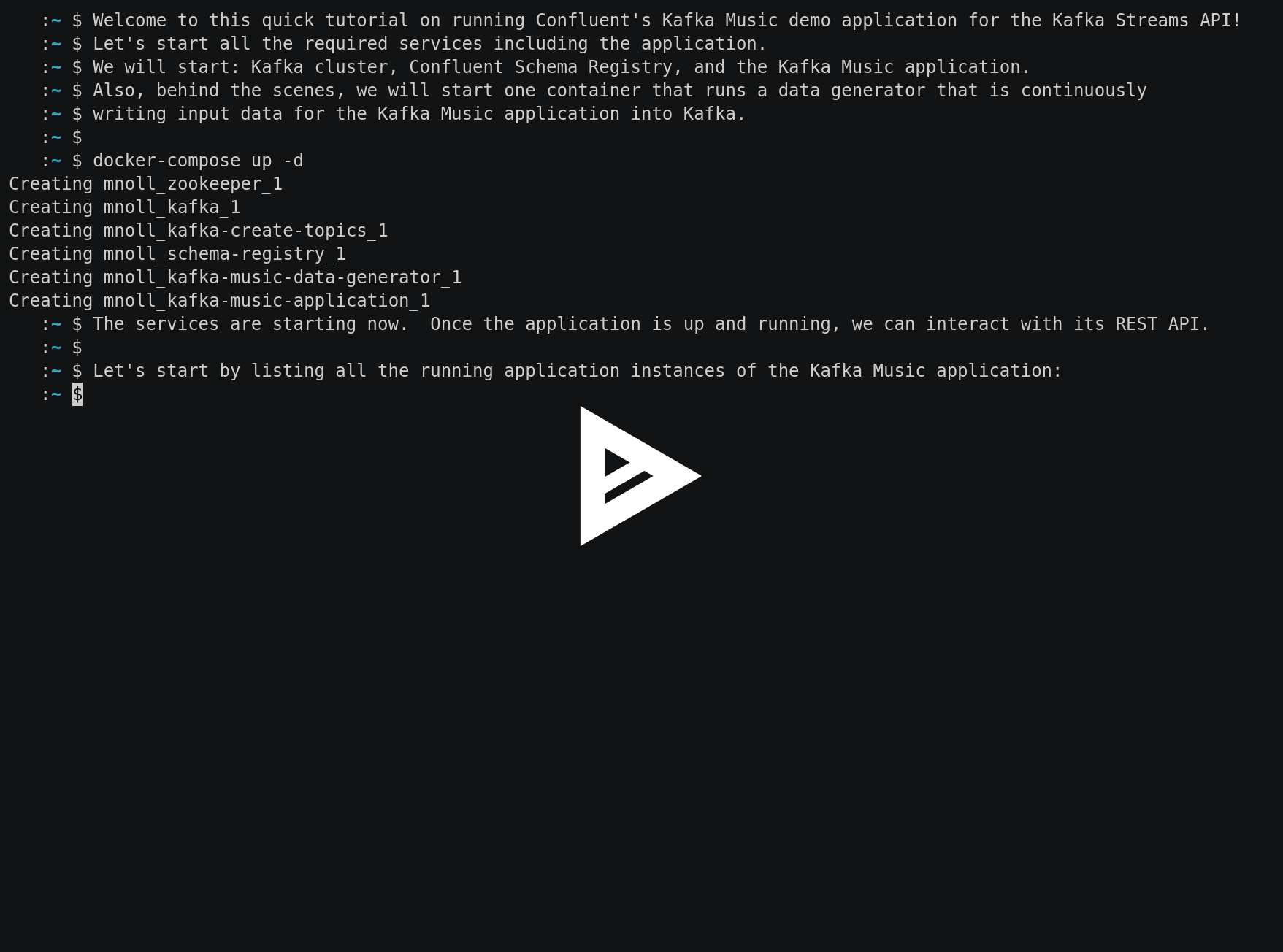

Getting Started with the Kafka Streams API using Confluent Docker Images

What’s great about the Kafka Streams API is not just how fast your application can process data with it, but also how fast you can get up and running […]

Watermarks, Tables, Event Time, and the Dataflow Model

The Google Dataflow team has done a fantastic job in evangelizing their model of handling time for stream processing. Their key observation is that in most cases you can’t globally […]

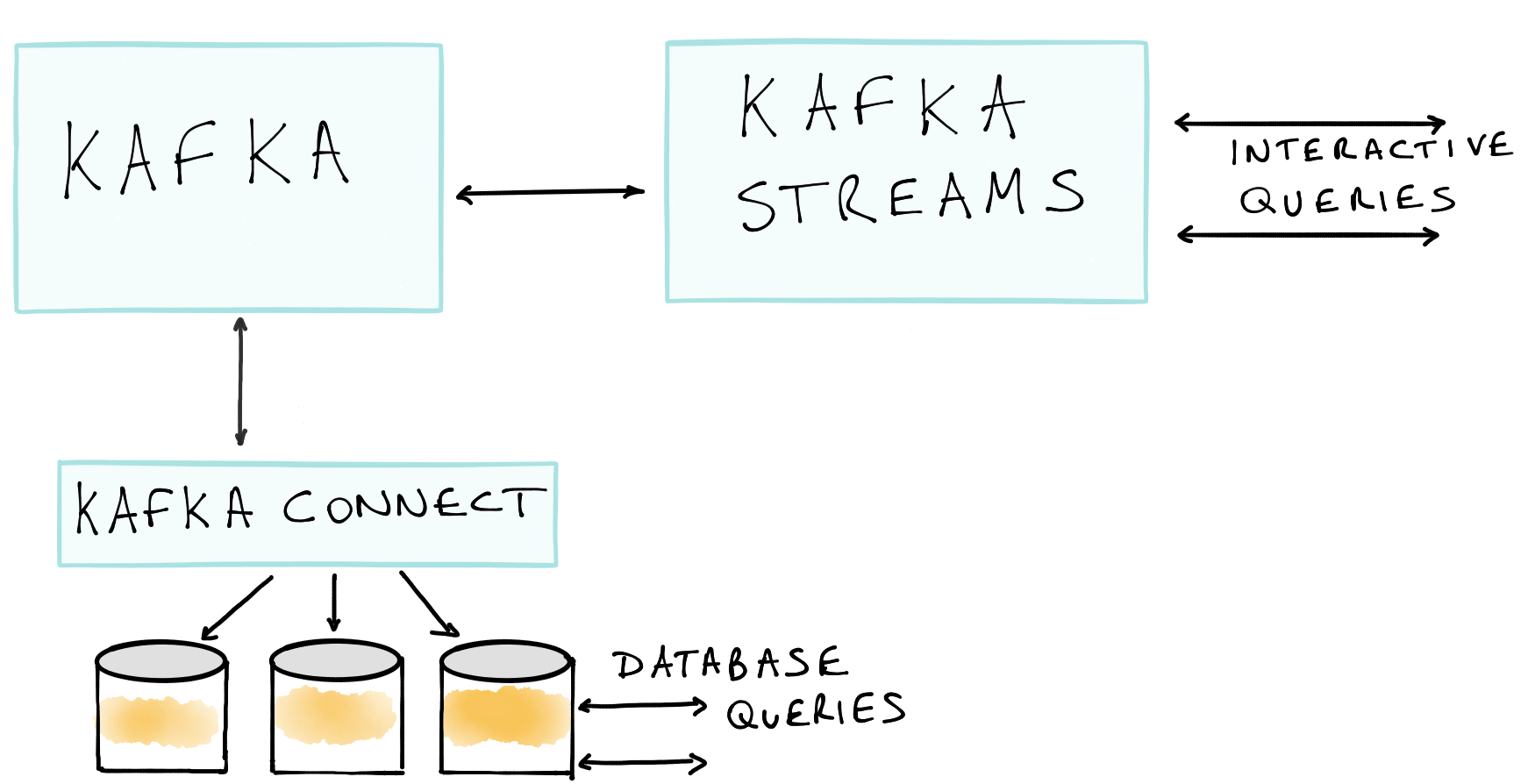

Unifying Stream Processing and Interactive Queries in Apache Kafka

This post was co-written with Damian Guy, Engineer at Confluent; Michael Noll, Product Manager at Confluent; and Neha Narkhede, CTO and Co-Founder at Confluent. We are excited to announce Interactive […]

Event sourcing, CQRS, stream processing and Apache Kafka: What’s the connection?

Event sourcing as an application architecture pattern is rising in popularity. Event sourcing involves modeling the state changes made by applications as an immutable sequence or “log” of events. Instead […]

Flink vs Kafka: a Complete Comparison

This blog post is written jointly by Stephan Ewen, CTO of data Artisans, and Neha Narkhede, CTO of Confluent. Stephan Ewen is a PMC member of Apache Flink and co-founder and CTO […]

Data Reprocessing with the Streams API in Kafka: Resetting a Streams Application

This blog post is the third in a series about the Streams API of Apache Kafka, the new stream processing library of the Apache Kafka project, which was introduced in Kafka v0.10.

Secure Stream Processing with the Streams API in Kafka

This blog post is the second in a series about the Streams API of Apache Kafka, the new stream processing library of the Apache Kafka project, which was introduced in Kafka v0.10. Current […]

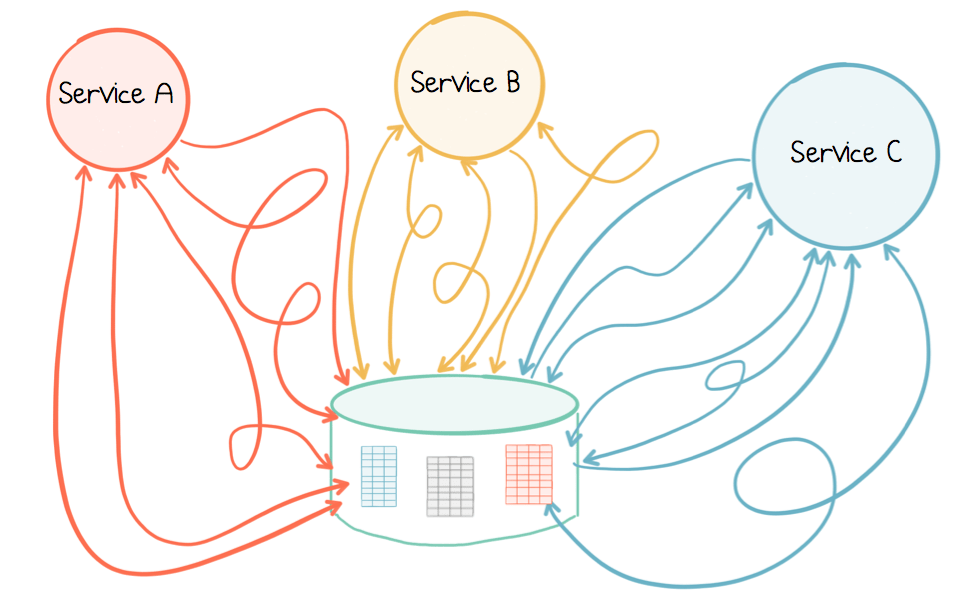

Elastic Scaling in the Streams API in Kafka

This blog post is the first in a series about the Streams API of Apache Kafka, the new stream processing library of the Apache Kafka project, which was introduced in Kafka v0.10. Current […]

Distributed, Real-time Joins and Aggregations on User Activity Events using Kafka Streams

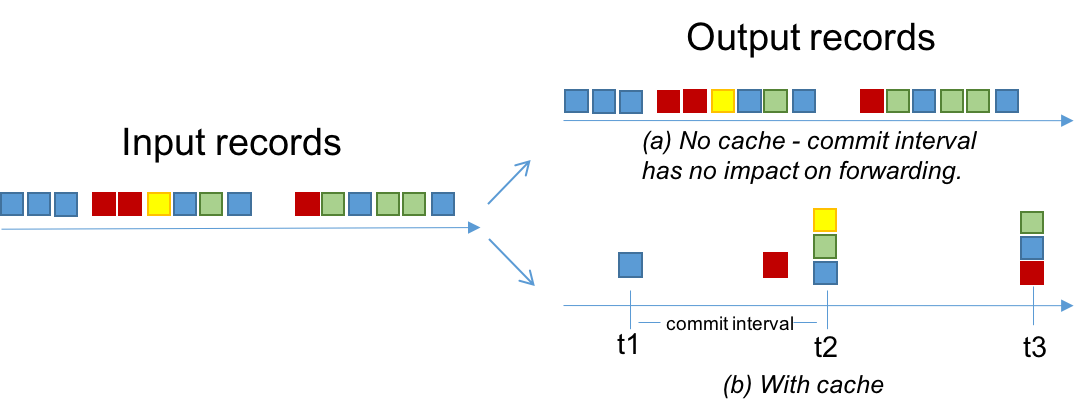

In previous blog posts we introduced Kafka Streams and demonstrated an end-to-end Hello World streaming application that analyzes Wikipedia real-time updates through a combination of Kafka Streams and Kafka Connect. […]

Introducing Kafka Streams: Stream Processing Made Simple

I’m really excited to announce a major new feature in Apache Kafka v0.10: Kafka’s Streams API. The Streams API, available as a Java library that is part of the official […]

How I Learned to Stop Worrying and Love the Schema

The rise in schema-free and document-oriented databases has led some to question the value and necessity of schemas. Schemas, in particular those following the relational model, can seem too restrictive, […]

Real-time full-text search with Luwak and Samza

This is an edited transcript of a talk given by Alan Woodward and Martin Kleppmann at FOSDEM 2015. Traditionally, search works like this: you have a large corpus of documents, […]