Technology

Queues for Apache Kafka® Is Here: Your Guide to Getting Started in Confluent

Confluent announces the General Availability of Queues for Kafka on Confluent Cloud and Confluent Platform with Apache Kafka 4.2. This production-ready feature brings native queue semantics to Kafka through KIP-932, enabling organizations to consolidate streaming and queuing infrastructure while...

AI Tools for Builders — Confluent's MCP Server & Agent Skills

Confluent's AI developer tools are now GA: an open-source local MCP server, a managed MCP server, and Agent Skills. Together they give AI coding assistants direct access to your streaming platform — the tools to act on it and the domain knowledge to build correctly.

New in Confluent Intelligence: Real-Time Context Engine Upgrade, New Model Support, ML Functions, and More

Explore new Confluent Intelligence features: enhanced querying with Real-Time Context Engine, PII detection, sentiment analysis, and support for TimesFM, Anthropic, and Fireworks AI models.

Dawn of Kafka DevOps: Managing Kafka Clusters at Scale with Confluent Control Center

When managing Apache Kafka® clusters at scale, tasks that are simple on small clusters turn into significant burdens. To be fair, a lot of things turn into significant burdens at […]

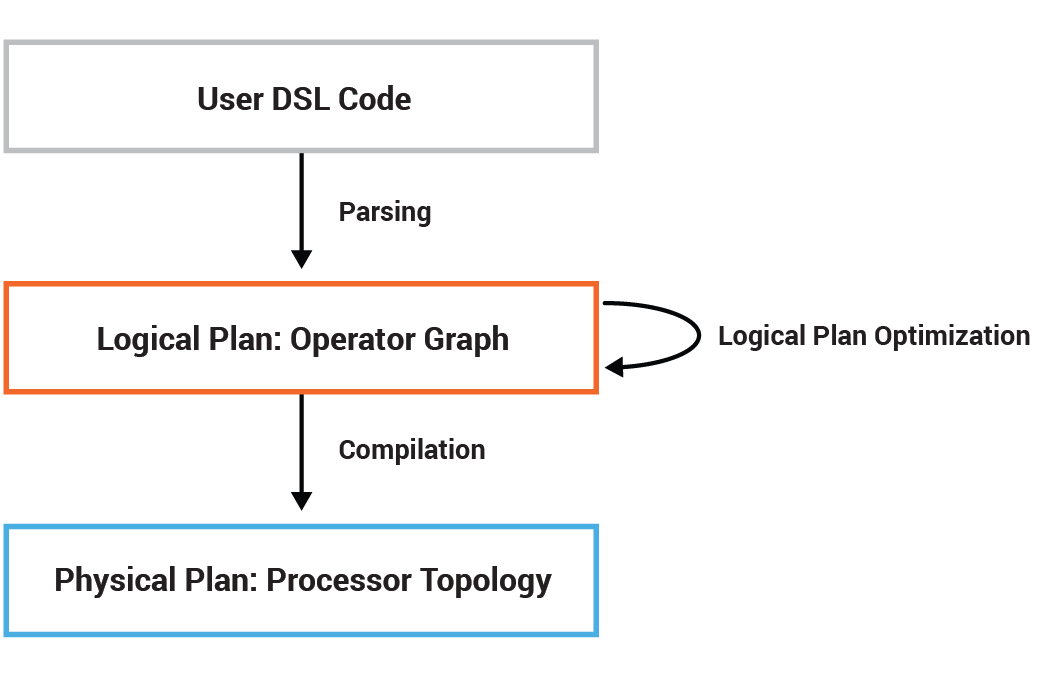

Optimizing Kafka Streams Applications

With the release of Apache Kafka® 2.1.0, Kafka Streams introduced the processor topology optimization framework at the Kafka Streams DSL layer. This framework opens the door for various optimization techniques […]

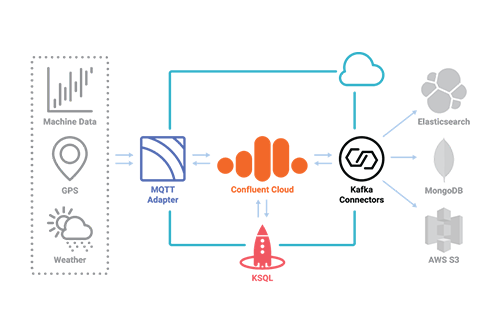

Creating an IoT-Based, Data-Driven Food Value Chain with Confluent Cloud

Industries are oftentimes more complex than we think. For example, the dinner you order at a restaurant or the ingredients you buy (or have delivered) to cook dinner at home […]

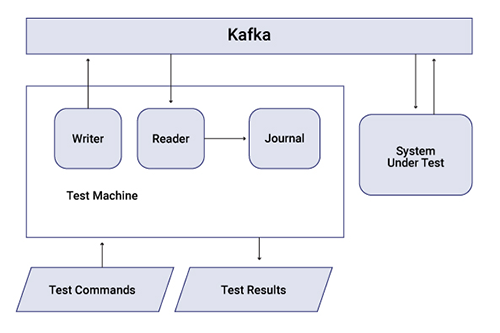

Testing Event-Driven Systems

So you’ve convinced your friends and stakeholders about the benefits of event-driven systems. You have successfully piloted a few services backed by Apache Kafka®, and it is now supporting business-critical […]

12 Programming Languages Walk into a Kafka Cluster…

When it was first created, Apache Kafka® had a client API for just Scala and Java. Since then, the Kafka client API has been developed for many other programming languages […]

Baader’s Journey in Crafting an IoT-Driven Food Value Chain with Data Streaming

While the current hype around the Internet of Things (IoT) focuses on smart “things”—smart homes, smart cars, smart watches—the first known IoT device was a simple Coca-Cola vending machine at […]

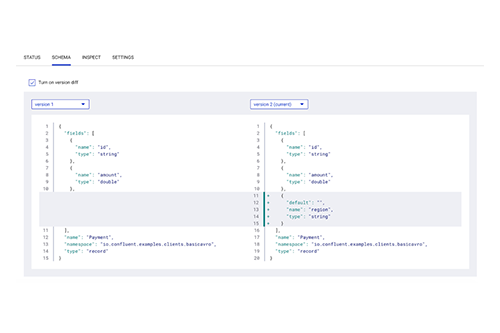

Dawn of Kafka DevOps: Managing and Evolving Schemas with Confluent Control Center

As we announced in Introducing Confluent Platform 5.2, the latest release introduces many new features that enable you to build contextual event-driven applications. In particular, the management and monitoring capabilities […]

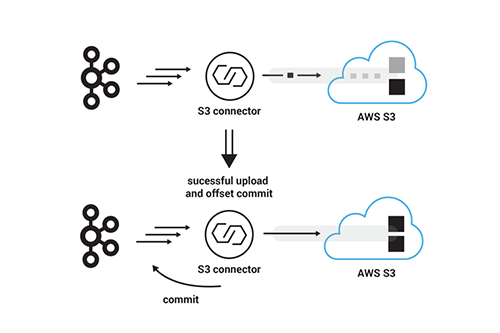

From Apache Kafka to Amazon S3: Exactly Once

At Confluent, we see that many of our customers are on AWS, and we’ve noticed that Amazon S3 plays a particularly significant role in AWS-based architectures. Unless a use case actively […]

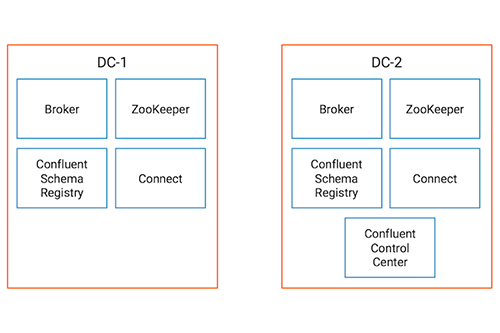

Monitoring Data Replication in Multi-Datacenter Apache Kafka Deployments

Enterprises run modern data systems and services across multiple cloud providers, private clouds and on-prem multi-datacenter deployments. Instead of having many point-to-point connections between sites, the Confluent Platform provides an […]

Announcing Confluent Cloud for Apache Kafka as a Native Service on Google Cloud Platform

I’m excited to announce that we’re partnering with Google Cloud to make Confluent Cloud, our fully managed offering of Apache Kafka®, available as a native offering on Google Cloud Platform […]

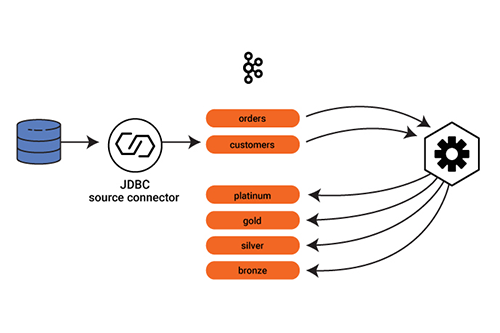

Putting Events in Their Place with Dynamic Routing

Event-driven architecture means just that: It’s all about the events. In a microservices architecture, events drive microservice actions. No event, no shoes, no service. In the most basic scenario, microservices […]

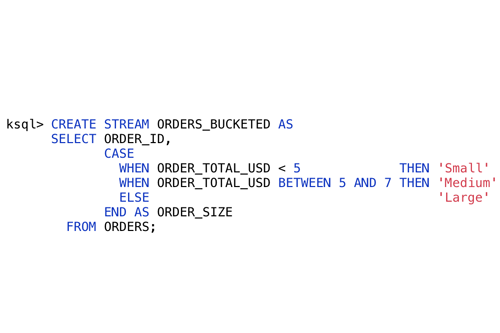

KSQL: What’s New in 5.2

KSQL enables you to write streaming applications expressed purely in SQL. There’s a ton of great new features in 5.2, many of which are a result of requests and support […]

Introducing Confluent Platform 5.2

Includes free forever Confluent Platform on a single Apache Kafka® broker, improved Control Center functionality at scale, and hybrid cloud streaming. We are very excited to announce the general availability […]

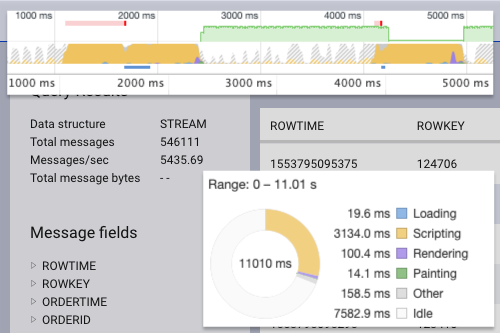

Consuming Messages Out of Apache Kafka in a Browser

Imagine a fire hose that spews out trillions of gallons of water every day, and part of your job is to withstand every drop coming out of it. This is […]