USE CASE | SHIFT-LEFT ANALYTICS

Spend Less Time Cleaning, More Time Engineering

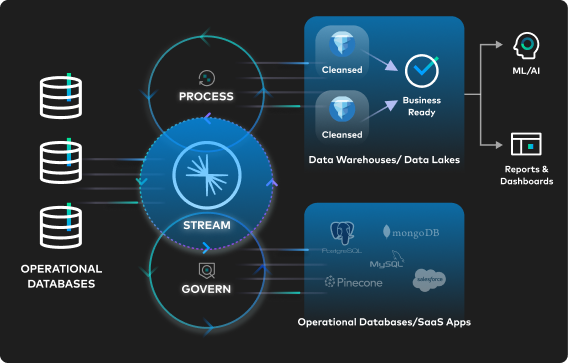

Ready to eliminate wasteful data proliferation and manual break-fix data pipelines? Process and govern data at the source—within milliseconds of its creation—with a data streaming platform.

Shifting processing and governance left allows you to reduce data quality issues by up to 60%, cut compute costs by 30%, and maximize engineering productivity and data warehouse ROI. Explore more resources to learn how to get started or download Shift Left: Unifying Operations and Analytics With Data Products.

Why Shift Processing & Governance Left?

In data integration, “shifting left” refers to an approach where data processing and governance are performed closer to the source of data generation. By cleaning and processing the data earlier in the data lifecycle, you can build data products that give all downstream consumers—including cloud data warehouses, data lakes, and data lakehouses—a single source of well-defined and well-formatted data.
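As a rough illustration of the idea, the sketch below validates, normalizes, and de-duplicates events once at the source, then lets two downstream consumers read the same cleaned records. It is a minimal in-memory Python sketch, not Confluent or Apache Flink code; the `Order` type, the `clean_at_source` function, and the sample fields are hypothetical names chosen for the example.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass(frozen=True)
class Order:
    """Hypothetical schema for the cleaned data product."""
    order_id: str
    amount_cents: int
    currency: str


def clean_at_source(raw_events: Iterable[dict]) -> Iterator[Order]:
    """Validate, normalize, and de-duplicate events once, before any consumer reads them."""
    seen_ids = set()
    for event in raw_events:
        order_id = event.get("order_id")
        amount = event.get("amount_cents")
        currency = event.get("currency")
        # Drop records that do not match the expected shape.
        if not isinstance(order_id, str) or not isinstance(currency, str):
            continue
        if not isinstance(amount, int) or amount < 0:
            continue
        # Drop duplicates so downstream systems never have to repeat this step.
        if order_id in seen_ids:
            continue
        seen_ids.add(order_id)
        yield Order(order_id, amount, currency.strip().upper())


raw = [
    {"order_id": "a1", "amount_cents": 1250, "currency": "usd"},
    {"order_id": "a1", "amount_cents": 1250, "currency": "usd"},  # duplicate
    {"order_id": "a2", "amount_cents": -5, "currency": "usd"},    # invalid amount
    {"order_id": "a3", "amount_cents": 900, "currency": " eur "},
]

# Clean once at the source...
data_product = list(clean_at_source(raw))

# ...then reuse the same records in more than one downstream context.
warehouse_rows = [(o.order_id, o.amount_cents, o.currency) for o in data_product]
lake_total_cents = sum(o.amount_cents for o in data_product)
```

In a streaming deployment the same logic would typically run continuously in a stream processor rather than over an in-memory list, but the principle is the same: the cleanup happens once, upstream of every consumer.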

Deliver Reusable, Trustworthy Data Products

Process and govern data once at the source, then reuse it in multiple contexts. Use Apache Flink® to shape data on the fly.

Power Analytics With the Freshest, High-Quality Data

Maintain high-fidelity data that flows continuously into your lakehouse and evolves seamlessly with Tableflow.

Maximize the ROI of Data Warehouses and Data Lakes

Reduce data quality issues by 40-60% and free up your data engineering team to work on more strategic projects.

“Confluent helped us shift left on our data, giving teams full ownership of their data from source to output. Now it’s clean, validated, and production-ready upstream, reducing rework and accelerating delivery.”

"Confluent Tableflow simplifies our data architecture, turning Kafka topics into trusted, analytics-ready tables in just a few clicks. We can now ensure a reliable data foundation for real-time customer insights and AI innovations."

Zazzle implemented Confluent’s fully managed Flink offering to transform their largest data pipeline. By shifting stream processing earlier in the pipeline before writing to Google BigQuery, Zazzle reduced storage and computation costs while delivering more relevant product recommendations, directly impacting revenue.

“We have 40,000+ ETL jobs today. It’s chaos…shifting data governance left would be transformational for our organization.”

“[Data cleaning] is a pricey way of pushing it down to the Delta Lake. De-duplication within Confluent is a cheaper way of doing it. We can only do it once.”

“I love the vision of [shift-left analytics]. This is how we would make datasets more discoverable. I knew that Confluent had an integration with Alation, but it's awesome to hear that [with Data Portal] you have other ways of enabling those capabilities.”

Trending in Shift-Left Analytics

How to Create Reusable Data Products with Shift Left

Watch an in-depth exploration of shift-left analytics and learn how this simple yet powerful approach to data integration is helping companies innovate today.

Show Me How: Shift Left Processing From Data Warehouses

Watch now

How to Optimize Data Ingestion Into Lakehouses & Warehouses

Get the guide

Tableflow Is GA: Unifying Apache Kafka® Topics & Apache Iceberg™️ Tables

Read blog

Conquer Your Data Mess With Universal Data Products

Download ebook

Accelerating Your Data Streaming Journey With Our Partners

We work together with our extensive partner ecosystem to make it easy for customers to build, access, discover, and share high-quality data products organization-wide. See how innovative organizations like Notion, Citizens Bank, and DISH Wireless are leveraging the data streaming platform and our native cloud, software, and service integrations to shift data processing and governance left and maximize the value of their data.

Notion Enriches Data Instantly to Power Generative AI Features

Citizens Bank Improves Processing Speeds by 50% for CX & More

DISH Wireless Creates Reusable Data Products for Industry 4.0

Maximize Your Data Warehouses in 4 Steps

Maximize your data warehouses and data lakehouses by feeding them fresh, trustworthy data. It all starts with a complete data streaming platform that lets you stream, connect, govern, and process your data (and materialize it in open table formats) no matter where it lives.

Learn how Confluent can help your organization shift left and maximize the value of your data warehouse and data lake workloads. Connect with us today to learn how to adopt shift-left architectures and accelerate your analytics and AI use cases.