The Program Committee Has Chosen: Kafka Summit NYC 2019 Agenda

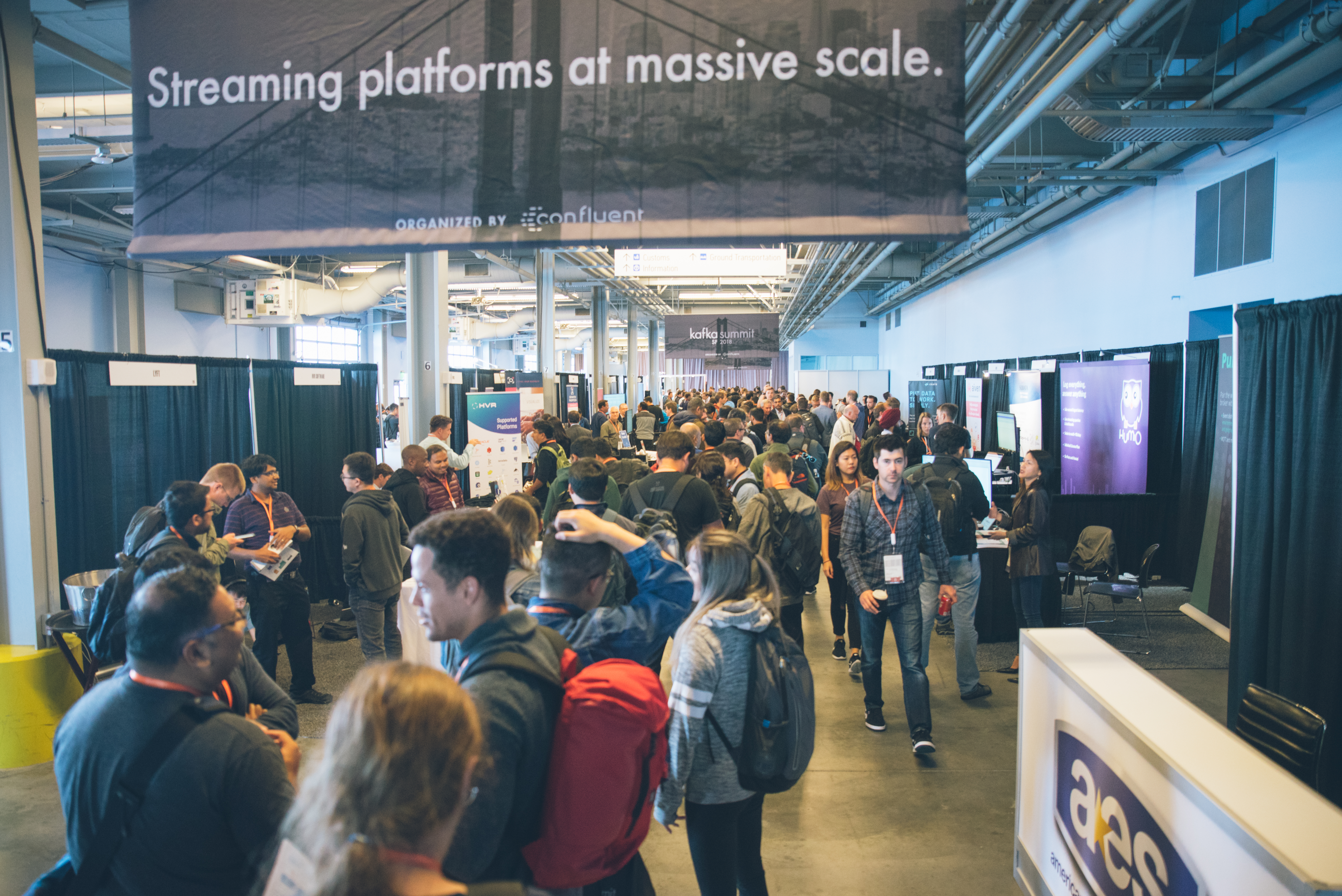

The East Coast called. They told us they wanted another New York Kafka Summit. So there was really only one thing we could do: We’re heading back to New York City in April to hold our first Summit of 2019!

If you were around for the last one, you may remember the keynotes from Ferd Scheepers of ING and our very own Confluent co-founders. Now we are just a few months out from Kafka Summit NYC 2019, and we could not be more excited.

Kafka Summit is the place to learn, discuss and bond over all things Apache Kafka and streaming related. I personally encourage you to come. For those of you who have joined us before, you already know how it goes! But read on, because we have changed the track structure to keep up to date with the rapidly evolving streaming landscape.

Keynotes from Pivotal and Confluent

First up, you can look forward to keynotes from Pivotal Senior Vice President James Watters and Confluent CEO and Co-founder Jay Kreps. These will be good.

Over 30 top-notch talks across four tracks

But you know, you can’t get too excited about an event without knowing the agenda. The good news is that the Kafka Summit NYC 2019 agenda is officially live! This was by far the most competitive selection process to date: the number and caliber of submissions keep rising each year. The Kafka community doesn’t exactly make it easy on the Program Committee, but this is a good problem to have.

The track definitions are also different this year, so check them out:

1. Core Kafka

Sessions in the Core Kafka track range from deep dives into Kafka’s internals to refreshers on the basics of the platform. The track focuses on the present and future of Kafka as a distributed messaging system, and will help you keep an eye on both the leading edge and the fundamentals as the platform matures. Whether you want to explore the internals or are learning Kafka for the first time, this track has you covered.

2. Stream Processing

Talks in this track cover APIs, frameworks, tools and techniques in the Kafka ecosystem used to perform real-time computations over streaming data. If you’re building an event-driven system—whether on KSQL, Kafka Streams or something else—you’ll greatly benefit from the experience shared in this track. It’s perfect if you’re looking for:

- An introduction to KSQL and Kafka Streams

- A deep dive into the details of KSQL and Kafka Streams

- An explanation of how stream processing fits into larger solutions involving Kafka Connect and other components

- Talks on other stream processing frameworks like Spark or Flink

3. Event-Driven Development

Streaming applications are unlike the systems we have built before, and we’re all learning this new style of development. Event-driven thinking requires deep changes to the request/response paradigm that has informed application architectures for decades, and a new approach to state as well. In this track, practitioners will share their own experiences and the architectures they have employed to build streaming applications and bring event-driven thinking to the forefront of every developer’s mind. Speakers in this track will:

- Share patterns they have developed while building event-driven systems

- Share the development and debugging techniques and libraries that have helped them build event-driven systems

- Present big-but-concrete ideas about streaming applications

4. Use Cases

Stream processing and event-driven development are being applied across pretty much every industry there is. The Use Cases track will feature stories of actual streaming platform implementations from a variety of verticals. Talks in this track will define the business problems faced by each organization, tell the story of what part streaming played in the solution and reveal the outcomes realized as a result. Check out this track if you’re interested in:

- Real production use cases

- Creative applications of streaming or events in interesting domains

- Why a streaming architecture was a better fit than the alternatives considered

- What the system did for the business

What’s to come

To give you a taste of what’s to come at Kafka Summit NYC 2019, here are a few standouts from the agenda:

- Kafka Streams at Scale, Deepak Goyal, Walmart Labs

- Streaming Ingestion of Logging Events at Airbnb, Hao Wang, Airbnb

- Bulletproof Kafka with Fault Tree Analysis, Andrey Falko, Lyft

- Aircraft Predictive Maintenance Pipelines at Singapore Airlines, Victor Wibisono, Singapore Airlines

- From a Million to a Trillion Events Per Day: Stream Processing in Ludicrous Mode, Shrijeet Paliwal, Tesla

- Kafka Connect and KSQL: Useful Weapons in Migrating from a Heterogeneous Legacy System to Kafka Streams, Alex Leung, Bloomberg

- How to Use Kafka and Druid to Tame Your Router Data, Rachel Pedreschi, Imply Data

And there are 25 more awesome talks in addition to these. For the full agenda, visit the Kafka Summit website.

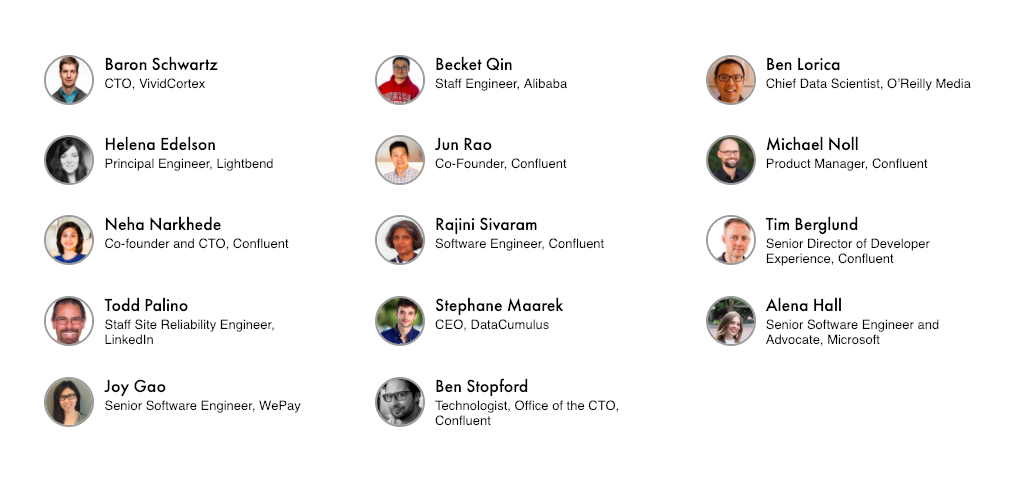

As you can see, this is going to be an amazing event, but as usual, it takes a robust Program Committee to make it happen. These folks have worked hard to review submissions and make the summit a reality. I would like to extend a special thanks to each member of the committee for their contributions.

I hope to see you at Kafka Summit NYC on April 2! If you haven’t already, make sure to take advantage of our early bird special and save $100 by registering by Feb. 8!
