Explore Real-Time Data Streaming Fundamentals and Use Cases at Current 2022

Today, 97% of organizations are using data streams to transform how their frontend applications and backend operations adapt to new information in real time.

Companies are using data streams to implement critical capabilities like fraud detection, customer 360, and Internet of Things (IoT) applications – and more than 80% of Fortune 100 companies rely on Apache Kafka® to support these kinds of real-time applications and services.

No matter how your organization plans to use data streaming to transform, there’s no better opportunity to learn what’s next than Current 2022: The Next Generation of Kafka Summit. Hear from industry experts at Netflix, Snowflake, Shopify, Uber, Microsoft Azure, and Nasdaq on how Apache Kafka is central to the rise of real-time use cases, and how data streaming is being used more broadly. That breadth is the impetus behind launching the inaugural Current event – it’s time we had an event dedicated to this ever-growing technology.

On October 4-5, Current 2022 will gather data streaming experts, influencers, and business leaders from the Kafka community and beyond. This two-day event in downtown Austin will allow industry leaders and technologists to learn and connect, accelerating innovation across the data streaming ecosystem.

See how this event can help you and your team discover – and realize – the real-time use cases and modernization your business needs most.

Making Connections Across the Data Streaming Ecosystem

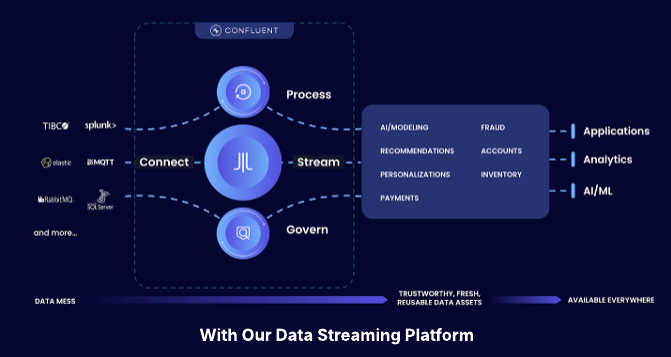

From financial services and tech to manufacturing and retail, organizations are taking advantage of data streaming platforms to implement real-time use cases that deliver information and analytics when and where they’re needed most. But connecting data streams across the whole business isn’t a simple task.

Empowering teams to take advantage of streaming data requires connecting and integrating countless data sources and databases – across dozens of applications, on-premises and cloud environments, and even partner organizations.

In the spirit of making connections, we reached out to three presenters to find out what they’re excited to learn and experience at Current 2022:

- Jay Patel, Software Engineer at Snowflake.

- Sanjana Kaundinya, Senior Software Engineer at Confluent.

- Viktor Gamov, Principal Developer Advocate at Kong Inc.

Jay, Sanjana, and Viktor shared the activities and topics they’re most excited to explore from the event agenda, which includes:

- Keynotes from visionary speakers like Confluent co-founders, Jay Kreps and Jun Rao

- Fundamentals of Apache Kafka training, both mornings

- Breakout sessions, including technical deep dives, lightning talks, and Ask the Experts Q&As

- Networking opportunities at the Meetup Hub and Expo Hall

- Live music and fun activities at the Current 2022 Party on Day 1 and the Data Streaming Celebration to close out Day 2

Register for Current 2022 with code PRMBLG.

4 Ways to Explore Data Streaming at Current 2022

From hyperscalers and data infrastructure vendors to startups and open source communities, innovators across the ecosystem are finding new ways to modernize and transform their organizations with data streaming. And they’re sharing those insights at Current 2022.

Leaders at financial institutions, tech organizations, eCommerce companies, and public sector agencies will discuss how they’re unlocking new value from data. Not only will this allow developers, data scientists, and architects to reimagine existing implementations, but it will also help business leaders discover new opportunities for innovation and growth.

1. Ask Questions Alongside Early Adopters and Technical Experts

There are plenty of opportunities for new and experienced data streaming practitioners to learn the foundations of data streaming at Current. For example, although Jay’s been working on Snowflake’s data ingestion tool, Snowpipe Streaming, for over two years now, he’s still excited to get a refresher on the basics and learn what’s new in the space.

Jay plans to attend one of the morning sessions for Fundamentals of Apache Kafka training. Alongside the advanced technical sessions that he’s looking forward to, he also wants to check out “Streaming 101 Revisited,” a session led by fellow Snowflake engineers revisiting a 2015 article of the same name.

Check out more sessions on the agenda at Current 2022.

2. Learn Best Practices for Modernizing Your Organization

Long-time developer advocate Viktor sees attending an event like Current as one of the best ways to keep up with industry best practices, especially as Kafka helps integrate more systems. “The Kafka protocol is kind of the lingua franca of streaming systems,” Viktor said. “Some of the systems might have a different internal architecture, but you can expose them to the Kafka protocol for any application to consume.”

Now that there’s a space to connect in person and broaden data streaming conversations beyond Kafka, Viktor anticipates seeing more discussion around how Kafka can help standardize API integration.

While it’s Jay’s first time presenting at an industry event, Sanjana and Viktor have previously presented at Kafka Summit. Both said they’re interested in the challenges and solutions that will surface in follow-up questions and in other presenters’ sessions, now that more of the data streaming ecosystem is represented.

In particular, Sanjana is looking at Current as a way to share her expertise in multi-region replication during her scheduled talk, “To Infinity and Beyond: Extending the Apache Kafka Replication Protocol Across Clusters.” She hopes to gather inspiration from attendees’ feedback, questions, and shared experiences to develop new iterations of Confluent’s Cluster Linking capabilities.

3. Discover the Real-Time Use Cases Transforming Your Industry

The value of streaming data isn’t limited to any particular industry or sector. Companies are looking for ways to integrate data streams with web and mobile applications, eCommerce experiences, and other digital experiences, while also using platforms like Kafka to process and integrate telemetric and IoT device data.

Since Snowflake recently launched Iceberg Tables, Jay shared that engineers at the company have been particularly interested in the Apache Iceberg project, an open table format originally developed and open-sourced by Netflix. “I’m super interested in how change data capture (CDC) and Apache Iceberg work together, of which, Iceberg Tables were recently announced by Snowflake,” Jay said. “So I’m really looking forward to Netflix’s talk.”

He’s also bookmarked sessions from LinkedIn, Robinhood, and Debezium community contributors like Gunnar Morling, hoping to learn about tools, resources, and methodologies that will be relevant to his work on Snowpipe Streaming and Snowflake Connector for Kafka.

Explore the rest of the Current 2022 agenda.

4. Envision the Future of Business, Transformed by Data Streaming

Organizations adopting real-time use cases will have to, as Viktor puts it, “mix and match solutions” to achieve implementations with the necessary performance and portability. That will become especially important as more organizations begin to pursue more advanced use cases.

Jay noted, “Eventually, these applications will have to perform more sophisticated forms of data analysis, like applying machine learning algorithms to extract deeper insights from the data.”

All three presenters are looking forward to hearing from the keynote speakers scheduled to speak at Current. Sanjana’s especially looking forward to Jun Rao’s keynote with the CIO of USPS, while Jay and Viktor are excited to hear what Confluent CEO Jay Kreps will share about the direction of data streaming as a whole, as well as its effect on developer productivity, the adoption of managed services, and the delivery and launch of new products.

Join the Data Streaming Community at Current 2022

IT and engineering leaders in more than 80% of organizations report that real-time data streams are critical to their business. But adopting, modernizing, and maintaining underlying data systems will be an ongoing challenge, especially as organizations look for new ways to maximize the value of their data streams.

“Distributed systems are very complicated – then when you have a globally distributed system, it becomes 10 times more complicated, in my opinion,” Sanjana said. “That’s where I would say a lot of businesses and a lot of customers are trying to go toward.”

At Current 2022, you’ll have the opportunity to hear from technical experts, learn best practices from industry innovators, and find real-world inspiration to guide you, your team, and your organization into the future of data streaming.

Register now – and use the code PRMBLG for 25% off the registration fee.
