An Overview of Confluent Cloud Security Controls

Whether you are a developer working on a cool new real-time application or an architect formulating the plan to reap the benefits of event streaming for the organisation, security is an ever-present requirement that is impossible to ignore. Ignoring it will invariably cause trouble later in your project, and discovering security flaws in a released design or a production deployment can have devastating consequences. Using software as a service (SaaS) like Confluent Cloud for event streaming has massive benefits, but it does not mean that you can stop caring about security. Running workloads and putting sensitive data for business-critical applications outside of your own environment calls for serious assessment of the service.

To help support your security validation of Confluent Cloud, we recently published a cloud security white paper.

Cloud service security validation aspects

The security validation of a cloud service, its provider, and the provider’s processes becomes a key part of onboarding cloud services that will carry sensitive or classified data. This process is usually quite involved, with questionnaires and documentation going back and forth between the parties until security, legal, risk, and compliance teams all agree that the security controls and risks are within the organisation’s level of acceptance.

At Confluent, we see it as best practice to involve your security teams as early as possible in the projects we participate in. Experience shows that at large enterprises or governmental organisations, these validation processes can sometimes take months to complete.

If you are eager to kick-start your projects, this can be a frustrating wait, but you will benefit from understanding which security mechanisms to consider, such as authentication, authorization, and encryption.

If you are an architect looking to move event streaming workloads to a cloud service, you will face questions on how to comply with security policies as well as networking requirements. Networking and security requirements intersect in various ways when using the cloud, and this is especially true in hybrid environments where on-premises legacy systems need to be integrated into the cloud.

Security requirements might differ according to the use case as well. Use cases involving sensitive data like personally identifiable information (PII), personal health information (PHI), or financial information can carry a data classification that dictates how the data can be accessed, how and where it can be stored, and whether it needs to be encrypted.

Guiding principles

Confluent’s security philosophy centers around layered security controls designed to protect and secure your data in Confluent Cloud. In addition to organisational measures, we believe in multiple logical and physical security control layers, including access management, least privilege access, strong authentication, logging and monitoring, vulnerability management, and bug bounty programs.

Cloud providers and customer security controls

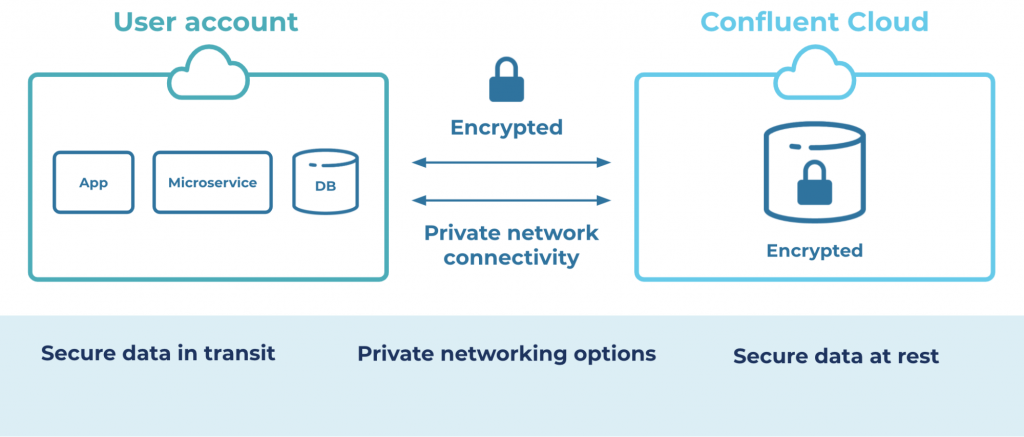

Confluent Cloud runs on cloud provider infrastructure and services, meaning that there are dependencies and inherited capabilities that are important to understand. Here, we talk about things like compliance certifications, physical access to datacenters, and private networking options. Understanding and trusting the security mechanisms in the underlying compute environment means that the application, in our case Confluent Cloud, stands on a solid foundation.

As Confluent Cloud builds on open source Apache Kafka®, there are inherited security capabilities like wire encryption (TLS), authentication, and access control lists (ACLs). Additionally, Confluent keeps adding security functions like single sign-on (SSO), bring your own key (BYOK) encryption, as well as the upcoming Role-Based Access Control (RBAC) and audit logging that will further help users comply with internal and external regulations and policies.
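As a rough illustration of the inherited wire encryption and authentication mentioned above, the following minimal sketch assembles client settings for a TLS-encrypted, SASL-authenticated connection. The property names are standard librdkafka client settings; the helper `build_client_config`, the broker address, and the credentials are hypothetical placeholders and are not taken from this article or the white paper.

```python
def build_client_config(bootstrap_servers: str, api_key: str, api_secret: str) -> dict:
    """Return librdkafka-style settings for an encrypted, authenticated client.

    Hypothetical helper for illustration only. All values are supplied by the
    caller; nothing here is specific to a real cluster.
    """
    if not (bootstrap_servers and api_key and api_secret):
        raise ValueError("bootstrap servers, API key, and API secret are all required")
    return {
        "bootstrap.servers": bootstrap_servers,
        # SASL_SSL = SASL authentication carried over a TLS-encrypted connection.
        "security.protocol": "SASL_SSL",
        # PLAIN sends the key/secret pair, which is why TLS must wrap it.
        "sasl.mechanisms": "PLAIN",
        "sasl.username": api_key,
        "sasl.password": api_secret,
    }


# Placeholder values (assumptions): substitute your own endpoint and credentials.
config = build_client_config("broker.example.com:9092", "EXAMPLE_KEY", "EXAMPLE_SECRET")
```

A dictionary like this could then be passed to a librdkafka-based client, for example `confluent_kafka.Producer(config)`, assuming that package is installed. Authorization through ACLs is configured on the cluster side and is not shown here.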

The old saying that “people are the weakest link in any security implementation” is still valid for the cloud, so understanding what processes and checks are wrapped around the people becomes paramount. The folks operating Confluent Cloud, our site reliability engineers (SREs), are not only subject to background checks as part of hiring but also operate under our internal security protocols and processes. For example, there are controls in place to ensure that they securely access production environments, and there are approval processes for actions that affect users’ environments or data. The Confluent Cloud admins are a small and tightly governed team that lives and breathes security as part of their day-to-day job.

Keep calm and carry on

I hope this short introduction provides an idea of the various security topics involved in a security validation process. At Confluent, we believe in transparency when it comes to security. This is true for our internal processes, security controls, and the security controls that we provide to users. To learn more, download the "Building Trust with Confluent Cloud" white paper.

The white paper gives an extensive overview of the security controls of Confluent Cloud. It has been published to save time for anyone involved in the validation process of onboarding Confluent Cloud, and it provides important insights for the security architects, engineers, and compliance teams working to onboard their organisation.
