
How to Break Off Your First Microservice

The road from a monolithic architecture to a cloud-native microservices application is rarely a straightforward engineering exercise. There's often a significant gap between understanding the theoretical benefits of microservices and successfully extracting each service from a mature, long-running codebase.

Many teams exploring microservices migration struggle most with the first extraction. How do you make that initial step concrete, low-risk, and reversible? It’s easy to be overwhelmed by envisioning the end state. The outcome—numerous independently deployable services owned by autonomous teams—should be reached incrementally, not through a single large transformation.

This step-by-step guide focuses on breaking off your first microservice as part of a safe, incremental migration from monoliths to microservices. It’s designed for teams early in this transition who want to start without destabilizing production. Let’s get started.

Why the First Microservice Is the Hardest

In any microservices migration, extracting services from all their dependencies and point-to-point integrations carries the most risk. If you feel hesitant about decomposing your application, that hesitation is justified. The first service extraction is uniquely challenging because you have to examine years of accumulated technical debt and unresolved organizational decisions at the same time.

That’s why the objective of the first service extraction should not be to achieve immediate scalability or to redefine organizational practices, but to validate a narrow capability. It’s about identifying a discrete unit of functionality that can be isolated, deployed independently, and integrated with the existing system without rewriting that system or introducing instability.

Fear of Breaking the Monolith: Technical Change vs. Organizational Change

In a monolithic architecture, ownership boundaries are often implicit or poorly defined. Dependencies are deeply embedded and not always visible until change is attempted. Mature monoliths tend to have hidden data coupling and behavioral dependencies that are far more complex in practice than in design documentation.

Introducing a distributed component also increases operational complexity for teams that may already be capacity-constrained, because the first microservice requires you to introduce independent deployment, monitoring, and incident response.

Extracting a service therefore isn’t just an exercise in technical refactoring: it requires redefining system boundaries that directly affect team responsibilities, communication patterns, data ownership, and on-call expectations.

Acknowledging that risk and deliberately reducing it as you break a monolithic application into independent services is the key to mapping out a migration plan that is sustainable for your team and minimizes risk to the business.

Signs You’re Ready to Break Off a Microservice

You do not need hyperscale traffic or exceptional engineering maturity to justify extracting your first microservice. Rather than waiting to satisfy a theoretical checklist, look for tangible friction in your current system that indicates the monolith is actively constraining delivery.

Technical Readiness and Signals

When considering breaking up your monolithic app, look for areas where the existing architecture is demonstrably slowing progress:

Change amplification: Is there a module where even minor modifications require full-system regression testing or coordinated releases, increasing risk and cycle time?

Stable domain logic: Is there a portion of the codebase with well-understood business rules that change infrequently, making its behavior predictable and suitable for isolation?

Clear domain boundaries: Are there identifiable seams where a cohesive set of responsibilities can be clearly defined and described as performing a single, focused function?

Team and Operational Readiness

Before you get started, your team should be prepared to own the service throughout its lifecycle. That means defining:

Explicit ownership responsibilities: Is there a clearly identified team—or at minimum a small, accountable group—willing to assume end-to-end responsibility for the service, including on-call support and production health?

Baseline automation: Do you have sufficient CI/CD automation in place to deploy and operate an additional artifact without destabilizing existing release processes?

How to Plan an Incremental Migration to Microservices

An incremental microservices migration strategy is a low-risk approach that prioritizes small, reversible changes. If you deliberately constrain the scope of work so that a single engineer can fully understand it and you can reverse it if the outcome is unsatisfactory, you limit the consequences of any failure.

Incremental Change, Not Large-Scale Rewrites

Avoid “big bang” modernization efforts, which often end in feature freezes when something inevitably goes wrong and the rewrite fails to meet production requirements. Instead, extract services incrementally, starting with those at the system’s boundaries.

This approach allows the core application to continue delivering business value throughout the migration.

Big Bang vs. Incremental Migrations: Trading Faster Timelines With Higher Risk for Gradual Architectural Overhauls

Learning Before Scaling

Your primary goal shouldn’t be short-term performance or scalability improvements but instead learning and documenting your approach to breaking off and owning a service end-to-end. By introducing a small, isolated service, your team can gain firsthand experience with the operational realities of distributed systems—such as network latency, consistency trade-offs, and deployment automation—within a controlled environment.

Ultimately, the microservices architecture you’re working toward won’t be scaled or managed effectively if your organization hasn’t learned how to reliably operate more than one service in production.

Choosing the Right Candidate for Your First Microservice

Choosing the right first service is critical in any microservices migration. Selecting an inappropriate first service is one of the fastest ways to derail a software modernization effort.

While you might assume that targeting the most complex or problematic part of the system offers the greatest immediate payoff, that's often counterproductive. The first microservice you extract should be chosen to maximize learning while minimizing systemic risk.

Remember: a good first microservice is small, already loosely coupled, and non-critical. So if something does go wrong, you can learn from it instead of derailing your entire initiative.

Evaluation Criteria for Low vs. High-Risk Services to Decouple

| Criterion | Good First Service (Low Risk) | Risky First Service (High Risk) |
| --- | --- | --- |
| Business Value | Peripheral/support | Core/critical path |
| Dependencies | Few to none (loose) | Many/complex (tight) |
| Data Coupling | Owns its data (low-shared) | High-shared database access |
| Failure Impact | Minimal/non-critical | Catastrophic/system-wide |
| Examples | Email service, PDF generator, reporting | Payment processing, checkout, authentication/identity |

Characteristics of a Good First Microservice

An effective first candidate is typically peripheral to core business workflows. Its failure should not interrupt primary revenue-generating transactions or compromise system integrity.

Strong candidates generally exhibit the following properties:

Low dependency footprint: The functionality interacts with a limited number of components in the monolith and does not require deep call chains across the system.

Low data coupling: The logic does not depend on complex joins across many shared tables or on implicit database-level behaviors.

Non-critical execution path: The system remains usable if the service experiences temporary unavailability or degraded performance.

Common examples include email or notification delivery, media processing (such as image resizing), or document generation utilities.

What to Avoid Initially

The initial extraction should not target the architectural core of the application, so avoid candidates that involve:

Core transactional workflows: Components such as shopping carts, order processing, or payment handling carry high correctness and availability requirements that make them unsuitable for an initial experiment.

Tightly shared persistence: Code that relies heavily on shared schemas, database triggers, or stored procedures indicates deep coupling that will significantly increase the cost and risk of a first extraction.

How to Decouple Services Without Breaking Everything

In a monolith to microservices transition, decoupling must begin within the existing codebase before introducing new infrastructure or network-level integration.

Step 1. Separate Behavior Before Separating Infrastructure

The safest way to break off your first microservice is to enforce boundaries inside the monolith before extracting it. Refactor the target functionality into a well-defined module that behaves as if it were already a remote service. Establish a strict public interface and ensure that all interactions occur exclusively through that interface.

No other component should access the module’s internal logic or persistence directly. Enforcing these constraints at the code level exposes hidden dependencies and implicit coupling while failures are still local and easy to diagnose—before network latency, partial failures, and retries complicate the system.
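As a minimal sketch of such a boundary, assuming a notification module is the extraction candidate, the monolith can depend on an interface while the implementation stays in-process for now. Every name here (`Notification`, `NotificationService`, `InProcessNotificationService`, `complete_order`) is hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import List, Protocol


@dataclass(frozen=True)
class Notification:
    recipient: str
    subject: str
    body: str


class NotificationService(Protocol):
    """The only surface the rest of the monolith is allowed to depend on."""

    def send(self, notification: Notification) -> bool: ...


class InProcessNotificationService:
    """Today's implementation, still running inside the monolith."""

    def __init__(self) -> None:
        # Private state: callers must never reach into this directly.
        self._outbox: List[Notification] = []

    def send(self, notification: Notification) -> bool:
        self._outbox.append(notification)
        return True

    def sent_count(self) -> int:
        return len(self._outbox)


def complete_order(order_id: str, email: str, notifier: NotificationService) -> bool:
    # Monolith code talks to the interface, not to the implementation.
    return notifier.send(
        Notification(recipient=email, subject=f"Order {order_id} confirmed", body="Thanks!")
    )
```

Because `complete_order` only knows about `NotificationService`, a later out-of-process client could be swapped in without touching the calling code.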

Transitioning Away From a Tightly Coupled Monolith Starts With Decoupling Services Within the Existing Codebase

Step 2. Define Contracts and Interfaces

Once the module boundary of your first microservice is stable, you’ll need to define the integration seam that will exist after extraction. In other words: how the monolith will communicate with the service when it runs out-of-process.

Make sure to specify a clear, explicit, and versioned contract (e.g., REST or gRPC) that formalizes request and response semantics and supports independent evolution.
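One way to picture a versioned contract is a small, explicit message type with a version field. The sketch below assumes a JSON payload and a hypothetical `SendEmailRequest` message; the field names and the version numbers are illustrative, not a prescribed schema.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional

SUPPORTED_VERSIONS = {1, 2}  # assumption: the service accepts both versions
KNOWN_FIELDS = {"version", "recipient", "subject", "body", "reply_to"}


@dataclass(frozen=True)
class SendEmailRequest:
    version: int
    recipient: str
    subject: str
    body: str
    # Added in version 2; optional so version 1 callers keep working.
    reply_to: Optional[str] = None


def encode(request: SendEmailRequest) -> str:
    return json.dumps(asdict(request))


def decode(payload: str) -> SendEmailRequest:
    data = json.loads(payload)
    version = data.get("version")
    if version not in SUPPORTED_VERSIONS:
        raise ValueError(f"unsupported contract version: {version!r}")
    # Ignore unknown fields so a newer caller does not break an older service.
    return SendEmailRequest(**{k: v for k, v in data.items() if k in KNOWN_FIELDS})
```

Making new fields optional and ignoring unknown ones is what lets the monolith and the service evolve on separate release schedules.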

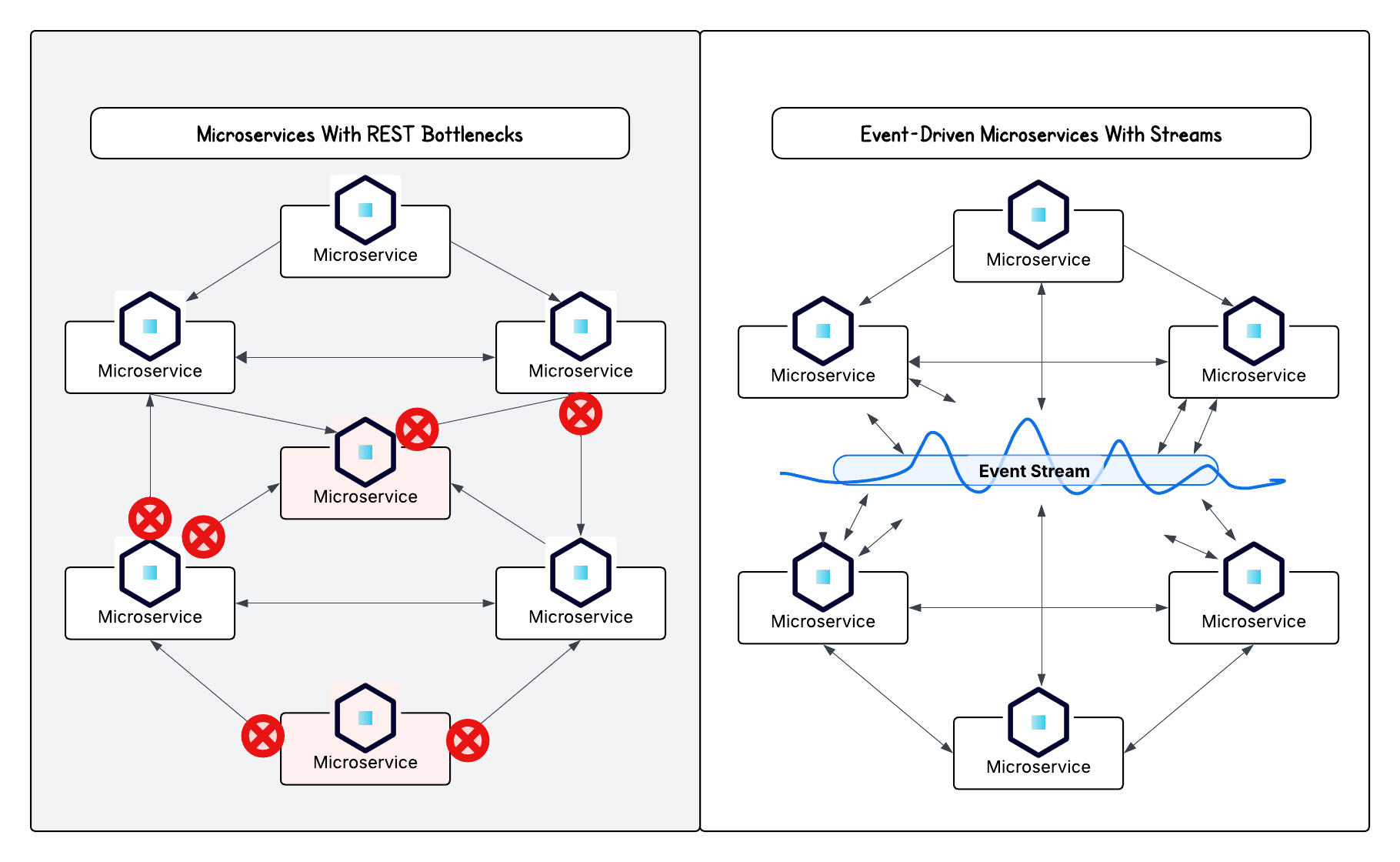

Where possible, consider an event-driven approach to reduce temporal coupling and limit the blast radius of failures. So instead of invoking the service synchronously, the monolith can publish domain events that the service consumes asynchronously.

Apache Kafka® is a popular event streaming engine to use for microservice orchestration and interservice communication because of its capabilities in handling high throughput workloads at scale, with low latency and high availability. But it’s also ideal for decoupling data systems, a key part of the shift from a few event-driven microservices to an event-driven architecture.
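The publish-and-consume shape can be sketched without a broker. In the example below an in-memory `queue.Queue` stands in for a topic purely so the code is self-contained; it is not Kafka, and the event name `OrderPlaced` and both functions are hypothetical.

```python
import json
import queue
from dataclasses import asdict, dataclass
from typing import List


@dataclass(frozen=True)
class OrderPlaced:
    order_id: str
    customer_email: str


# Stand-in for a topic. A real deployment would use a broker instead.
order_events: queue.Queue = queue.Queue()


def place_order(order_id: str, customer_email: str) -> None:
    """Monolith side: record the fact and return without waiting for email."""
    event = OrderPlaced(order_id=order_id, customer_email=customer_email)
    order_events.put(json.dumps({"type": "OrderPlaced", **asdict(event)}))


def run_email_consumer(sent: List[str]) -> int:
    """Service side: drain pending events and return how many were handled."""
    handled = 0
    while True:
        try:
            raw = order_events.get_nowait()
        except queue.Empty:
            return handled
        event = json.loads(raw)
        if event["type"] == "OrderPlaced":
            sent.append(f"confirmation to {event['customer_email']} for {event['order_id']}")
            handled += 1
```

The monolith never calls the email logic directly, so an outage in the consumer delays confirmations rather than failing the order.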

Data: The Hardest Part of Decoupling the First Microservice

Data separation is the hardest phase of any microservices migration. Shared databases are the most common source of hidden coupling in monolithic architectures, often acting as the primary force binding a monolith together by creating coupling that is not immediately visible in the application layer.

Shared Databases and Hidden Coupling

In a fully realized microservices architecture, the preferred model is one database per service. For an initial extraction, however, adopting this pattern immediately may introduce excessive risk. As a transitional state, it’s acceptable for a newly extracted service to connect to the monolith’s database, provided it interacts exclusively with the tables for which it is the logical owner.

Carefully scrutinize how shared databases impact services within your application. Foreign key constraints, cross-schema joins, database triggers, and shared stored procedures frequently encode hidden dependencies that undermine service isolation. You must explicitly identify and understand all database-level interactions before any service is moved out of process.
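Part of that inventory can be automated. The sketch below uses SQLite only because it ships with Python and keeps the example self-contained; other databases expose similar metadata through their own catalogs. The function name and the `owned_tables` parameter are assumptions made for illustration.

```python
import sqlite3
from typing import List, Set


def find_cross_boundary_coupling(conn: sqlite3.Connection, owned_tables: Set[str]) -> List[str]:
    """List foreign keys that cross the service boundary and triggers on owned tables."""
    findings = []
    tables = [row[0] for row in conn.execute("SELECT name FROM sqlite_master WHERE type = 'table'")]
    for table in tables:
        # Each row: (id, seq, referenced_table, from_column, to_column, ...)
        for fk in conn.execute(f"PRAGMA foreign_key_list('{table}')"):
            referenced = fk[2]
            # Flag keys where exactly one side belongs to the new service.
            if (table in owned_tables) != (referenced in owned_tables):
                findings.append(f"foreign key: {table}.{fk[3]} -> {referenced}.{fk[4]}")
    # Triggers on owned tables may touch other tables, so list them for manual review.
    for name, table in conn.execute("SELECT name, tbl_name FROM sqlite_master WHERE type = 'trigger'"):
        if table in owned_tables:
            findings.append(f"trigger: {name} on {table}")
    return findings
```

A report like this does not replace reading the application's queries, since joins written in code leave no trace in the schema.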

Managing Data Ownership Over Time

The long-term objective is unambiguous data ownership. Even if data remains physically co-located in a shared database initially, the new service should be the sole writer to its tables. Other parts of the system must access that data through the service’s API rather than through direct database reads or writes.

Trade-offs are inevitable during this transition. You may have to implement temporary mechanisms such as data synchronization, replication, or controlled dual writes for now. Ultimately, a constrained, imperfect intermediate state is preferable to stalling your migration timeline in pursuit of architectural purity.

Deployment and Operational Considerations for Your First Microservice

Extracting code is only part of the transition. Once functionality runs out of process, it must be deployed, monitored, and operated as an independent system. Even in an incremental microservices migration, operational overhead increases slightly with each new service, because a distributed architecture introduces requirements that do not exist in a single-application model.

Running One Service Differently Than the Rest of the Monolith

A clear deployment strategy is required for the new service. Decide whether it will share the monolith’s delivery pipeline or use a separate one, and ensure that choice does not increase coordination risk.

Key considerations include:

Failure isolation: If the service becomes unavailable or responds slowly, the monolith must degrade gracefully. Timeouts, retries with backoff, and circuit breakers should be in place to prevent cascading failures.

Rollback capability: Maintain an explicit escape hatch. The monolith should be able to immediately revert to its original in-process implementation—via a feature flag or configuration switch—if the service exhibits unexpected behavior.
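The two considerations above can be combined in a single call site. This is a minimal sketch, assuming a hypothetical PDF rendering service: `use_remote` plays the role of the feature flag, `CircuitBreaker` is a simplified hand-written breaker rather than a library class, and the remote client is assumed to enforce its own timeout. Retries with backoff are left out to keep the example short.

```python
import time
from typing import Callable, Optional


class CircuitBreaker:
    """Opens after consecutive failures, then allows a trial call after a cool-down."""

    def __init__(self, failure_threshold: int = 3, reset_after_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    def allow(self) -> bool:
        if self._opened_at is None:
            return True
        # After the cool-down, let one trial call through (half-open state).
        return self._clock() - self._opened_at >= self.reset_after_s

    def record_success(self) -> None:
        self._failures = 0
        self._opened_at = None

    def record_failure(self) -> None:
        self._failures += 1
        if self._failures >= self.failure_threshold:
            self._opened_at = self._clock()


def render_pdf(doc_id: str, *, use_remote: bool,
               remote: Callable[[str], bytes], local: Callable[[str], bytes],
               breaker: CircuitBreaker) -> bytes:
    # Flag off or breaker open: use the original in-process implementation.
    if not use_remote or not breaker.allow():
        return local(doc_id)
    try:
        result = remote(doc_id)
    except Exception:
        breaker.record_failure()
        return local(doc_id)  # degrade gracefully instead of failing the request
    breaker.record_success()
    return result
```

Keeping the in-process path callable is what makes the rollback immediate: turning the flag off requires no deployment of either system.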

Observability and Rollback Readiness

Operating multiple services requires visibility beyond local logs. Diagnosing failures now depends on understanding interactions across process boundaries.

At a minimum, operational readiness should include:

Centralized logging: Logs from both the monolith and the service must be collected and correlated to trace requests end-to-end.

Health checks: The service should expose a health endpoint that reflects its ability to handle requests, not merely that the process is running.

Baseline metrics: Monitor latency, error rates, and throughput on the interaction between the monolith and the service to detect regressions early.
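As a rough illustration of the last two items, the sketch below shows a health function that reports on dependencies rather than process liveness, and a small recorder for latency and error rate on calls between the monolith and the service. Both `health` and `CallMetrics` are hypothetical helpers, not part of any monitoring library.

```python
import time
from typing import Any, Callable, Dict, List


def health(checks: Dict[str, Callable[[], bool]]) -> Dict[str, Any]:
    """Report 'ok' only if every dependency check passes."""
    results = {}
    for name, check in checks.items():
        try:
            results[name] = bool(check())
        except Exception:
            # A check that raises counts as a failed dependency.
            results[name] = False
    status = "ok" if all(results.values()) else "unavailable"
    return {"status": status, "checks": results}


class CallMetrics:
    """Records latency and errors for calls that cross the service boundary."""

    def __init__(self) -> None:
        self.latencies_ms: List[float] = []
        self.errors = 0

    def observe(self, fn: Callable[..., Any], *args: Any) -> Any:
        start = time.perf_counter()
        try:
            return fn(*args)
        except Exception:
            self.errors += 1
            raise
        finally:
            # Latency is recorded for successful and failed calls alike.
            self.latencies_ms.append((time.perf_counter() - start) * 1000)

    @property
    def error_rate(self) -> float:
        total = len(self.latencies_ms)
        return self.errors / total if total else 0.0
```

In practice these numbers would be exported to whatever metrics system the team already runs; the point is to measure the boundary itself, not only each side of it.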

Measuring Success (and Learning)

You’ll know that breaking off your first microservice has been a success when you have a deep understanding of three things: the structural changes required, the operational responsibilities involved, and clear next steps in breaking off your second service and beyond.

What Early Success Looks Like

For an initial extraction, define success based on structural and operational outcomes rather than performance metrics:

Reduced coupling: Was a clear and enforceable boundary established between the monolith and the service, with dependencies flowing through explicit contracts?

Independent delivery (over time): Can the team responsible for the service deploy changes without coordinating with the monolith’s release cycle?

Operational understanding: Does the team now have firsthand experience with distributed-system realities such as latency, failure modes, and service-level metrics?

Feedback Loops for the Next Step

Treat the first extraction as an experiment. Conduct frequent retrospectives and document findings. Identify what proved more difficult than expected: network latency, data ownership, operational overhead, or deployment friction.

These insights will directly inform the approach and sequencing of subsequent service extractions.

Common Mistakes to Avoid When Migrating to Microservices

Well-intentioned patterns can undermine the effort if left unchecked. Here’s how to avoid overscoping early service extractions or undermining your overall approach to microservices.

Overscoping the First (or Second) Service

The most common failure mode is attempting to fix too much at once. The temptation to refactor business logic, upgrade dependencies, and redesign data models during extraction might be strong, especially if your first extraction is a success.

But if multiple axes of change are introduced simultaneously, you’re increasing your risks while decreasing your ability to learn from mistakes safely. The scope of your first several services should remain narrowly focused on separation, not optimization.

Treating Microservices as a Checkbox

Extracting one service or even multiple does not mean your organization “has microservices.” It is not a milestone to be declared and forgotten. Microservices are an architectural approach that requires sustained ownership, operational discipline, and ongoing investment. Without that commitment, the extracted service will quickly become yet another monolith.

What Comes After the First Microservice

A monolith to microservices journey does not require continuous extraction.

Once the service is running stably in production, pause deliberately. Completing the first extraction is a meaningful achievement.

From One Service to a Strategy

You now have empirical data rather than assumptions, including the real cost of data separation, the operational burden introduced, and the impact on team workflows. Use this evidence to evaluate whether additional services should be extracted and, if so, which candidates are most appropriate.

Deciding Whether to Continue

Importantly, continued migration is optional. After reviewing the outcomes, you may conclude that the operational complexity outweighs the benefits for your product and team. That decision still represents success. You explored an architectural path, validated it against reality, and made an informed choice—rather than proceeding on faith or trend-driven momentum.

Ready to get started? If you want to move away from monoliths, try extracting your first microservice using serverless Kafka, free on Confluent Cloud.

Monolith to Microservice FAQs

Do you need microservices to scale? No, you do not need microservices to scale. Many large systems run successfully as monoliths for years.

How many microservices should you start with? Exactly one. Master the art of managing two distinct applications (the monolith and your new service) before you even consider adding a third.

Can you stop after just one? Absolutely. If you extract one service and find that the added complexity isn't worth the benefit, stop. A hybrid architecture of a monolith plus a few satellite services is a very common and effective pattern.

What if the first microservice fails? If you chose a non-critical candidate and built in a rollback mechanism, a failure is just a learning opportunity. Revert traffic back to the monolith's internal path, conduct a blameless post-mortem, and try again later with better knowledge.

Apache®, Apache Kafka®, and Kafka® are registered trademarks of the Apache Software Foundation. No endorsement by the Apache Software Foundation is implied by the use of these marks.
