Find a Partner

Making Your Data Streaming Use Cases a Reality

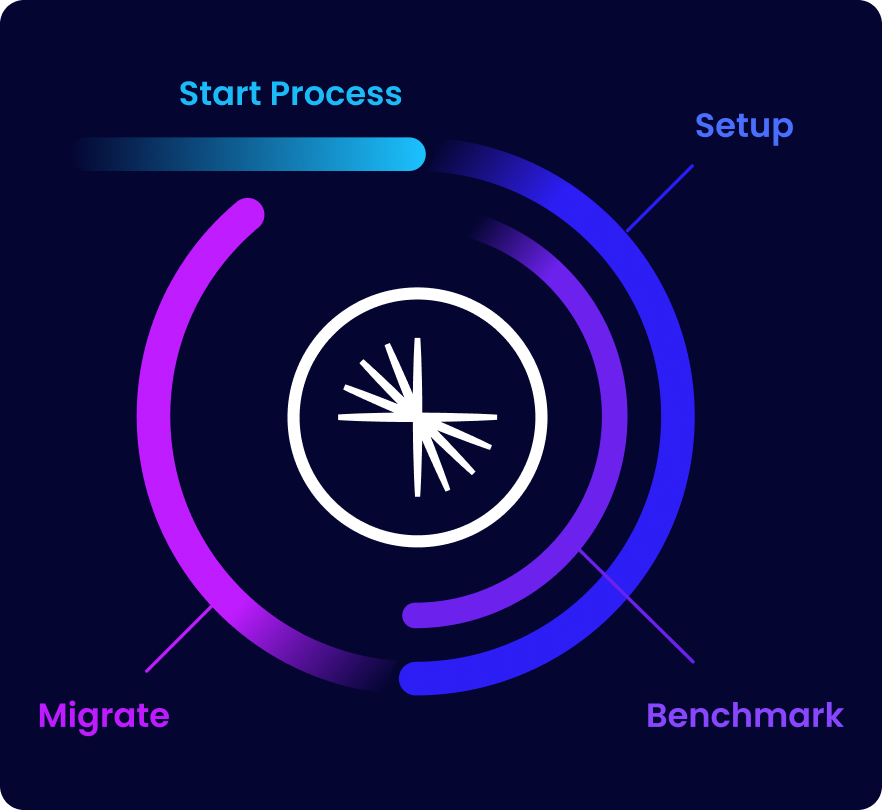

Confluent has an extensive partner network that empowers organizations of all sizes. Explore partner resources and see how Confluent and our partners help you launch new use cases, migrate legacy systems, and transform your organization.

Migrate to Confluent With Ease

Confidently migrate from Apache Kafka® or a Kafka service to Confluent and modernize your legacy systems. Partners like Improving and our cloud service providers deliver tailored migration offerings that streamline your transition from any version of Kafka or traditional messaging systems to Confluent.

Unlock New Use Cases, Faster

Confluent’s global and regional system integrators and cloud partners give you end-to-end support and resources to serve customers and streamline operations in real time. See how this ecosystem of technology experts can deliver the solutions and managed services you need to break down data silos and accelerate innovation with Confluent.

Integrate Your Data, Wherever It Lives

Ready to integrate and use data across multiple sources and systems in real time? Use one of our 120+ pre-built Kafka connectors in your cloud or on-premises deployments. Or take advantage of our native integrations with partner products like AWS Lambda, ClickHouse, Couchbase, Decodable, Redis, or SAP Datasphere.

Looking to Become a Partner?

Confluent offers a number of programs that help partners accelerate value for their customers.