
Technology Blog

Data Products, Data Contracts, and Change Data Capture

Change data capture is a popular method to connect database tables to data streams, but it comes with drawbacks. The next evolution of the CDC pattern, first-class data products, provides resilient pipelines that support both real-time and batch processing while isolating upstream systems...

Exploring Apache Flink 1.19: Features, Improvements, and More

Check out all the highlights from the Apache Flink® 1.19 release!

Introducing Apache Kafka 3.7

Apache Kafka 3.7 introduces updates to the Consumer rebalance protocol, an official Apache Kafka Docker image, JBOD support in KRaft-based clusters, and more!

Taking KSQL for a Spin Using Real-time Device Data

We are pleased to invite Tom Underhill to join us as a guest blogger. Tom is Head of R&D at Rittman Mead, a data and analytics company who specialise in […]

Apache Kafka Goes 1.0

It has been seven years since we first set out to create the distributed streaming platform we know now as Apache Kafka®. Born initially as a highly scalable messaging system, […]

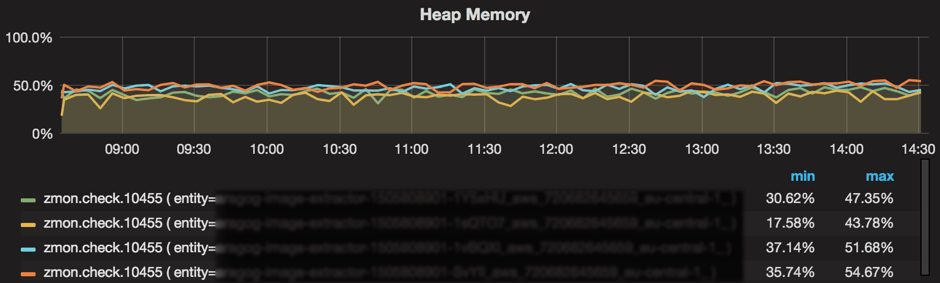

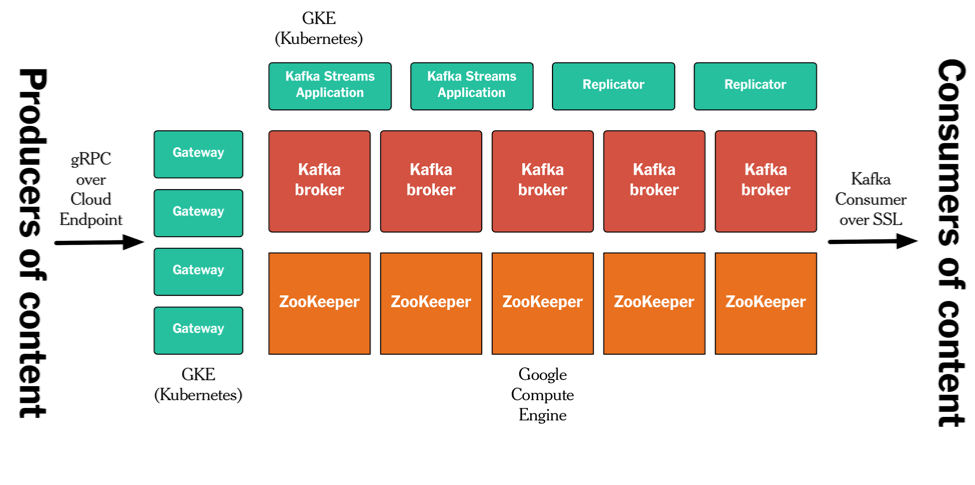

Running Kafka Streams Applications in AWS

This guest blog post is the second in a series about the use of Apache Kafka’s Streams API by Zalando, Europe’s largest online fashion retailer. See Ranking Websites in Real-time […]

Ranking Websites in Real-time with Apache Kafka’s Streams API

This article is by Hunter Kelly, Technical Architect at Zalando. Hunter enjoys using technology, and in particular machine learning, to solve difficult problems. He’s a graduate of the University of […]

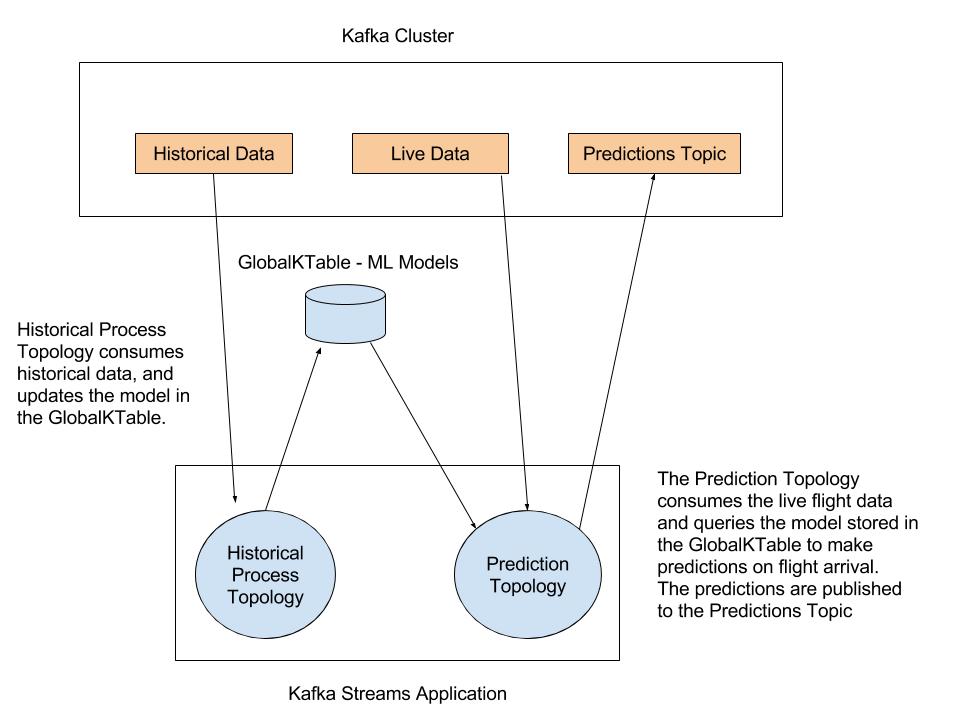

Predicting Flight Arrivals with the Apache Kafka Streams API

Kafka Streams makes it easy to write scalable, fault-tolerant, and real-time production apps and microservices. This post builds upon a previous post that covered scalable machine learning with Apache Kafka, […]

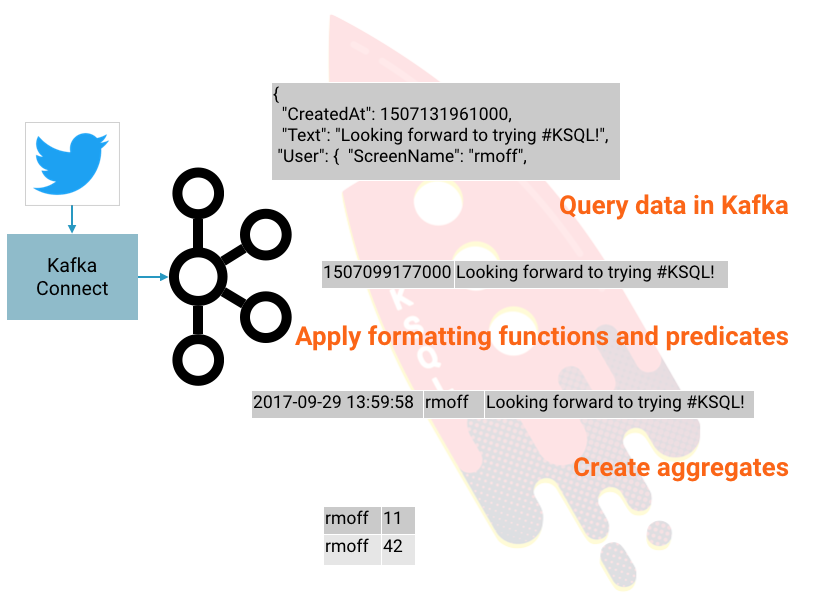

Getting Started Analyzing Twitter Data in Apache Kafka through KSQL

KSQL is the streaming SQL engine for Apache Kafka®. It lets you do sophisticated stream processing on Kafka topics, easily, using a simple and interactive SQL interface. In this short […]

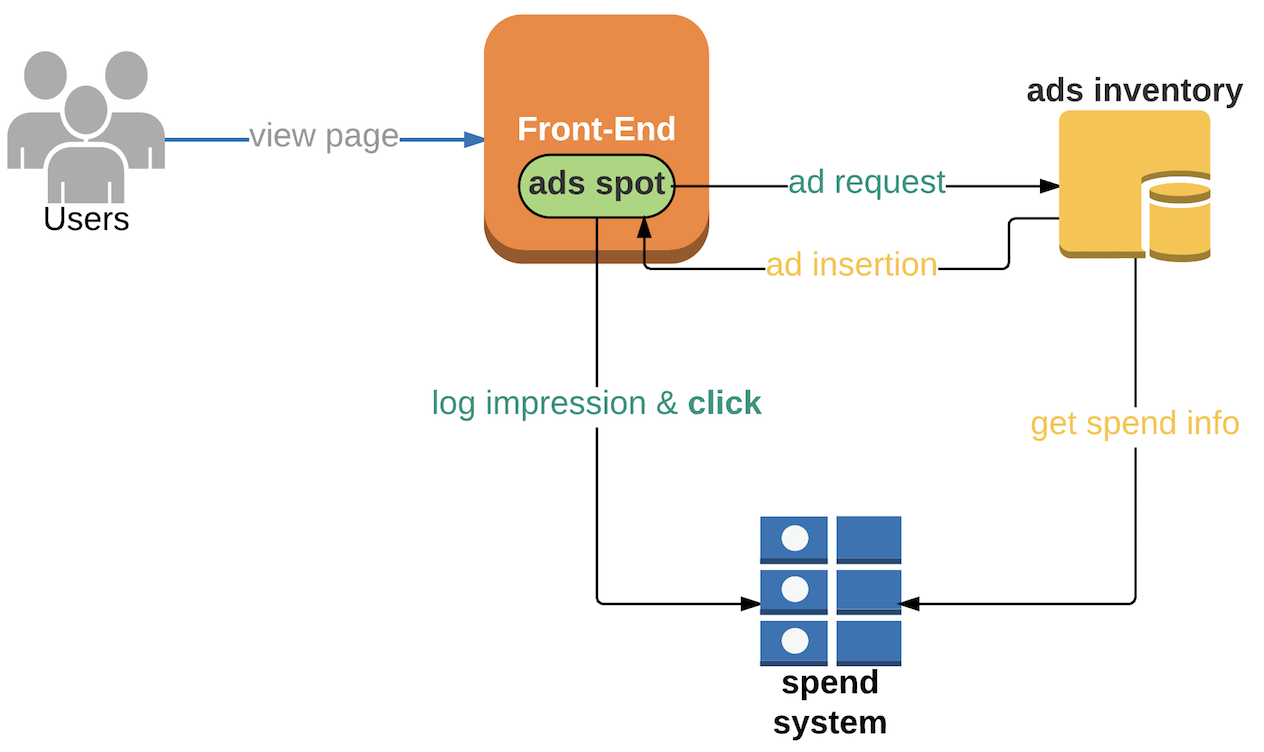

Using Kafka Streams API for Predictive Budgeting

At Pinterest, we use Kafka Streams API to provide inflight spend data to thousands of ads servers in mere seconds. Our ads engineering team works hard to ensure we’re providing […]

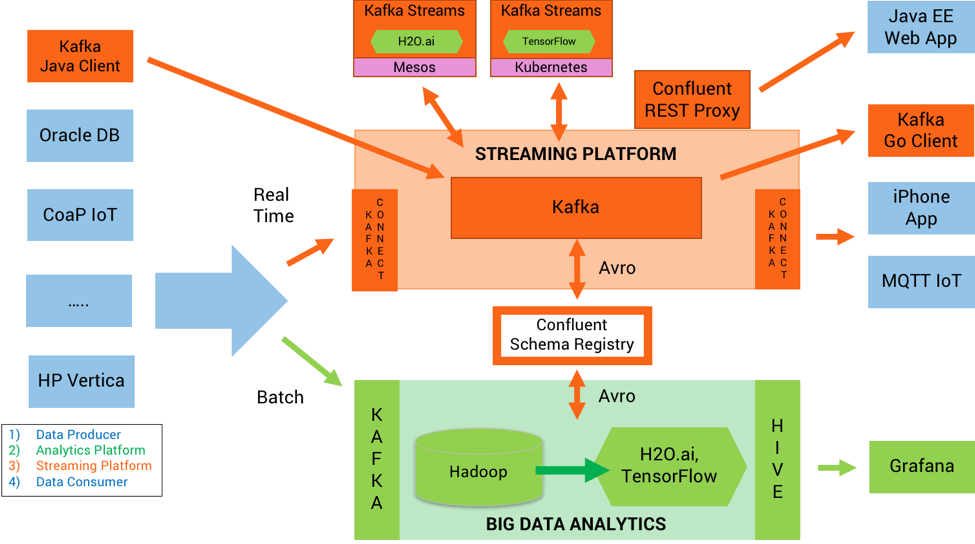

How to Build and Deploy Scalable Machine Learning in Production with Apache Kafka

Intelligent real-time applications are a game changer in any industry. Machine learning and its sub-topic, deep learning, are gaining momentum because […]

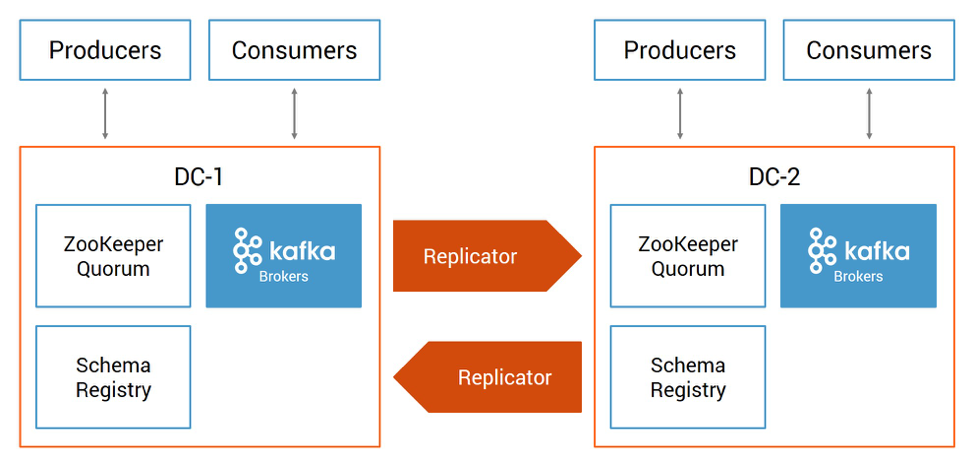

Disaster Recovery for Multi-Datacenter Apache Kafka Deployments

Datacenter downtime and data loss can result in businesses losing a vast amount of revenue or entirely halting operations. To minimize the downtime and data loss resulting from a disaster, […]

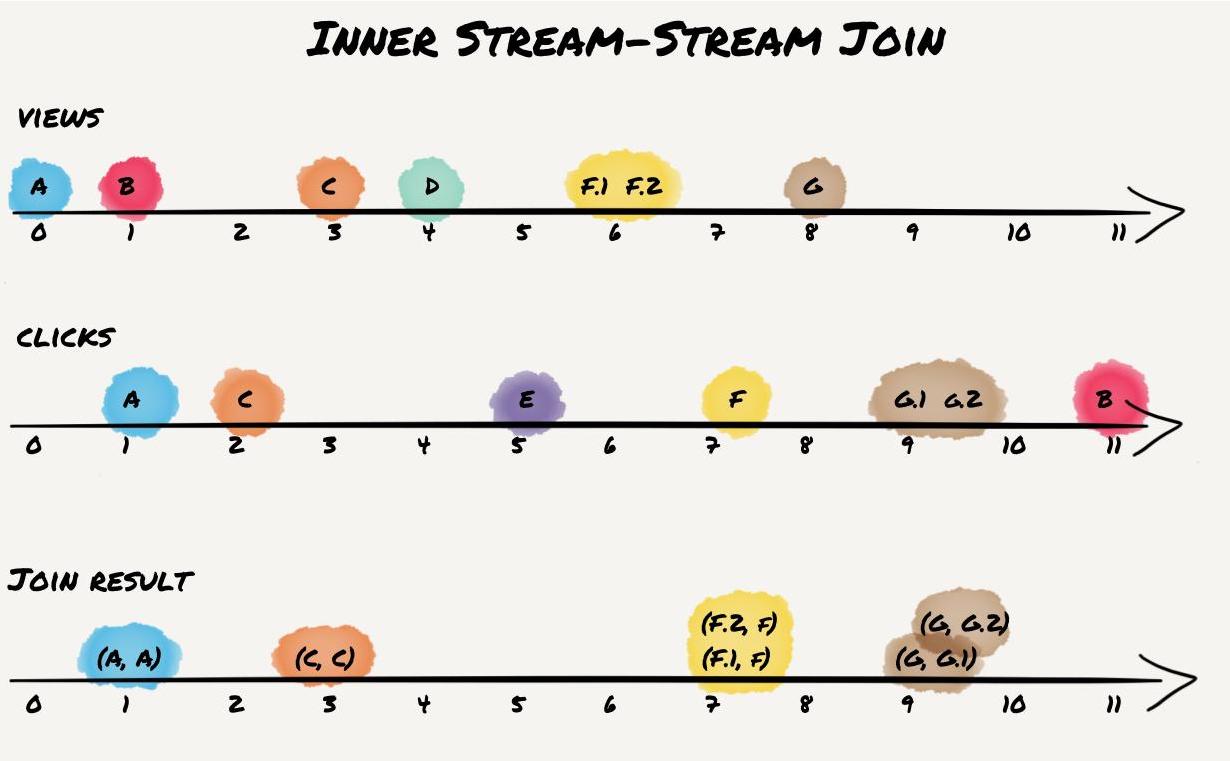

Crossing the Streams – Joins in Apache Kafka

This post was originally published at the Codecentric blog with a focus on “old” join semantics in Apache Kafka versions 0.10.0 and 0.10.1. Version 0.10.0 of the popular distributed streaming […]

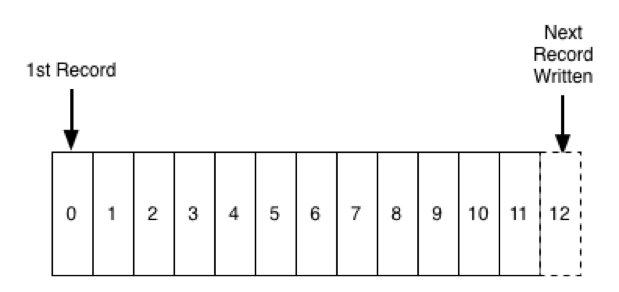

It's Okay To Store Data In Kafka

A question people often ask about Apache Kafka® is whether it is okay to use it for longer term storage. Kafka, as you might know, stores a log of records, […]

How Apache Kafka is Tested

Apache Kafka® is used in thousands of companies, including some of the most demanding, large-scale, and critical systems in the world. Its largest users run Kafka across thousands […]

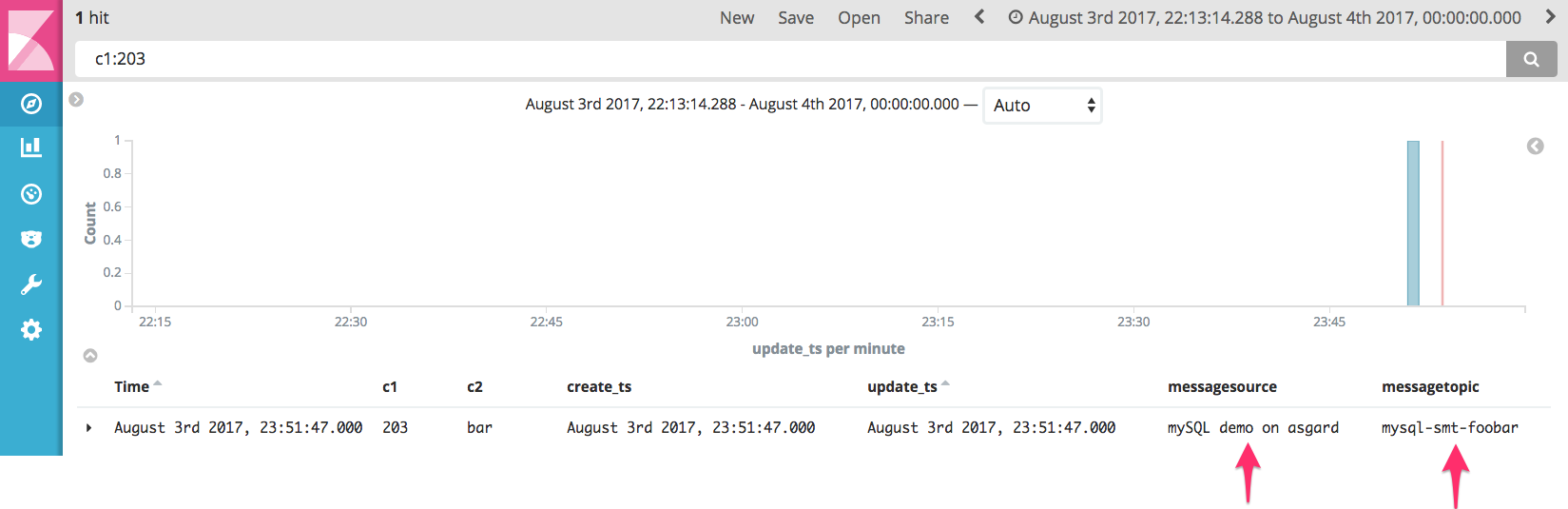

The Simplest Useful Kafka Connect Data Pipeline in the World…or Thereabouts – Part 3

We saw in the earlier articles (part 1, part 2) in this series how to use the Kafka Connect API to build out a very simple, but powerful and scalable, streaming […]

Publishing with Apache Kafka at The New York Times

At The New York Times we have a number of different systems that are used for producing content. We have several Content Management Systems, and we use third-party data and […]